For businesses that deal with petabyte-scale data environments representing billions of individual items, such as transactions or material components, the capacity to rapidly analyze that data is vital. Storing data in an on-premises warehouse is an increasingly unpopular option because of hardware costs and performance challenges. Cloud-based data warehouses have helped enterprises navigate these issues, and most Chief Data Officers at businesses with vast repositories of data are planning to move that data to the cloud. Despite competition from large established companies like Amazon, Google, and Microsoft, Snowflake has emerged as a popular choice with these stakeholders. The company has reached 1,000 customers and just completed a $450 million funding round that values it at $3.5 billion, despite only emerging from stealth mode in 2014.

Snowflake is a cloud-based solution that is, in a word, fast. Its speed is largely due to its distinctive multi-cluster architecture. In a traditional data warehouse, storage, compute, and database services are tightly coupled, whether because of how the physical nodes are configured or because of how the data warehouse appliance is designed. Even “big data” platforms tie storage, compute, and services together within the same nodes. These platforms can scale compute and storage to some degree, but their performance suffers as the number of workloads and users increases. Snowflake, by contrast, is built on patented multi-cluster technology that separates these layers, so its customers get the capabilities of existing solutions in a more nimble framework. This architecture enables automatic scaling of storage, analytics, and workgroup resources, which typically shows up as faster query response times.

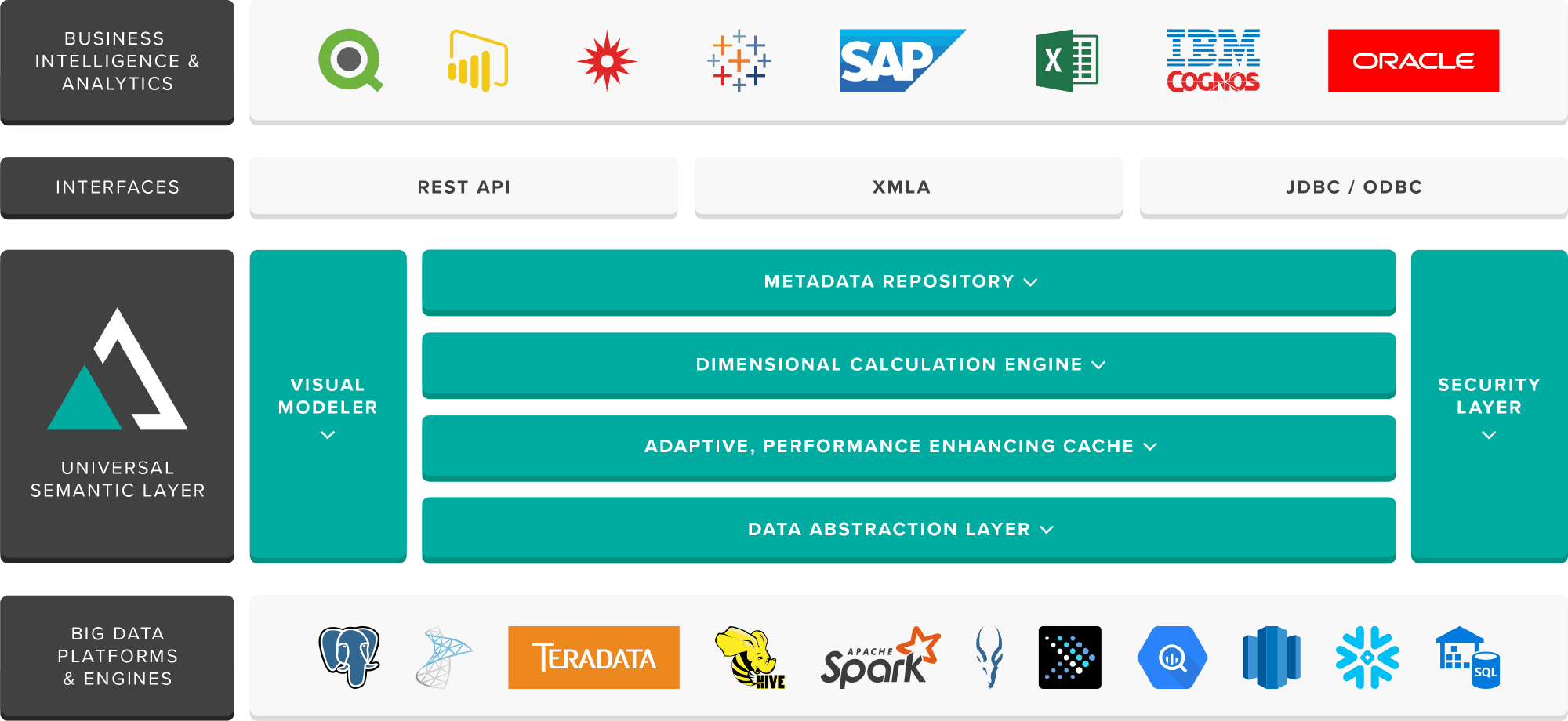

Snowflake supports multiple use cases, from the relatively simple scenario of providing faster reporting to business users to more complex cases like providing a data-as-a-service environment for an organization’s customers. Regardless of the specific business need, the people who need data in large companies (the nimble decision-makers, the number crunchers, the brave analytics miners who run reports in Excel or Tableau) have to be able to talk to where the data is stored. While Snowflake’s pay-as-you-go pricing allows organizations to spend far less to access stored data than they would in a traditional environment, costs can still rise rapidly as the amount of data grows. Even in a cloud environment like Snowflake, delivering interactive performance while keeping costs low remains a challenge. That’s where AtScale comes in.

Using machine learning, AtScale’s data analytics fabric minimizes the number of rows a query has to scan to generate a report. It does this by building aggregate tables: precomputed summaries of the raw data. Once data is contained in these aggregate tables, queries that can be answered from them no longer need to read the raw data from storage. Each aggregate table summarizes data at the level of one or more dimensional attributes (for example, sales totals per region); if no dimensional attributes are included, the aggregate holds the totals of the included measures across all rows.
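To make the idea concrete, here is a minimal in-memory sketch of aggregate tables in Python. It is not AtScale’s implementation: the function `build_aggregate`, the sample rows, and the column names are hypothetical, and the machine-learning step that decides which aggregates to build is not shown. The sketch builds one aggregate from raw rows, then answers coarser queries from that aggregate alone, including the no-dimension case that produces grand totals.

```python
from collections import defaultdict
from typing import Dict, List, Mapping, Sequence, Tuple

Row = Mapping[str, object]


def build_aggregate(
    rows: Sequence[Row], dimensions: Sequence[str], measures: Sequence[str]
) -> List[Dict[str, object]]:
    """Sum each measure for every distinct combination of dimension values.

    With an empty `dimensions` list, every row falls into the same group,
    so the result is a single row of grand totals.
    """
    groups: Dict[Tuple[object, ...], Dict[str, float]] = defaultdict(
        lambda: {m: 0 for m in measures}
    )
    for row in rows:
        key = tuple(row[d] for d in dimensions)
        for m in measures:
            groups[key][m] += row[m]
    # Emit one output row per group: dimension values followed by summed measures.
    return [{**dict(zip(dimensions, key)), **sums} for key, sums in groups.items()]


# Hypothetical raw fact rows (e.g. individual transactions).
transactions = [
    {"region": "East", "month": "2019-01", "amount": 120},
    {"region": "East", "month": "2019-01", "amount": 80},
    {"region": "West", "month": "2019-01", "amount": 50},
    {"region": "West", "month": "2019-02", "amount": 70},
]

# Built once from the raw data: one row per (region, month).
region_month = build_aggregate(transactions, ["region", "month"], ["amount"])

# Later queries read the smaller aggregate instead of the raw rows.
# Re-summing sums is valid because "amount" is additive.
by_month = build_aggregate(region_month, ["month"], ["amount"])
grand_total = build_aggregate(region_month, [], ["amount"])

print(len(transactions), "raw rows vs", len(region_month), "aggregate rows")
print(by_month)     # [{'month': '2019-01', 'amount': 250}, {'month': '2019-02', 'amount': 70}]
print(grand_total)  # [{'amount': 320}]
```

Rolling up an aggregate this way only works for additive measures such as sums and counts; a measure like a distinct count cannot be re-summed from a coarser summary and needs different handling.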

AtScale’s aggregate tables further strengthen the two primary reasons for choosing a cloud data warehouse like Snowflake: increased performance and reduced cost. With aggregate tables, the time needed to generate a report shrinks. Ordinarily, reworking data tools for faster reporting can take weeks or months, but with aggregate tables, the heavy aggregation work is done only once. As a result, subsequent retrievals become very fast; combining AtScale with Snowflake’s inherent speed can cut the time it takes to generate a monthly report from 2-3 days to 10 minutes. With this faster time to insight, enterprises can respond efficiently to critical business questions instead of delaying action while they wait for a report.

In addition to improving the performance of business intelligence on Snowflake, AtScale’s technology reduces the total cost of ownership of a cloud data warehouse. Cloud data warehouses are priced on a pay-per-use model, which in Snowflake’s case is measured in credits. Because AtScale’s aggregate tables remove the need to scan raw data for every query, using AtScale with Snowflake reduces the number of credits an enterprise consumes and therefore needs to purchase.
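Snowflake bills virtual warehouse compute in credits per hour of runtime, scaled by warehouse size, so shorter queries translate directly into fewer credits. The sketch below estimates credits for a single warehouse run, assuming the commonly published standard rates (1 credit per hour for X-Small, doubling with each size) and per-second billing with a 60-second minimum each time a warehouse resumes; check Snowflake’s current pricing documentation before relying on these figures. The function `estimate_credits` and the runtimes are hypothetical and illustrative, not measurements.

```python
# Assumed credits per hour for standard Snowflake virtual warehouses;
# verify against Snowflake's current documentation.
CREDITS_PER_HOUR = {
    "X-SMALL": 1,
    "SMALL": 2,
    "MEDIUM": 4,
    "LARGE": 8,
    "X-LARGE": 16,
}

# Assumed minimum billed duration each time a warehouse resumes.
MINIMUM_BILLED_SECONDS = 60


def estimate_credits(size: str, runtime_seconds: float) -> float:
    """Estimate credits for one warehouse resume that runs for runtime_seconds.

    Simplification: ignores concurrency, auto-suspend delay, and
    cloud services charges.
    """
    billed_seconds = max(runtime_seconds, MINIMUM_BILLED_SECONDS)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


# Hypothetical runtimes: a report that scans raw data vs. one served
# from aggregate tables on the same Medium warehouse.
raw_scan = estimate_credits("MEDIUM", 45 * 60)
from_aggregates = estimate_credits("MEDIUM", 90)
print(f"raw: {raw_scan:.2f} credits, aggregates: {from_aggregates:.2f} credits")
```

The comparison holds warehouse size constant, so the credit difference comes entirely from runtime, which is what aggregate tables reduce by scanning fewer rows.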

Send an email to sales@atscale.com to learn more about how AtScale and Snowflake work together.
