Enterprise data management has changed immensely over the past few decades…we’ve lived through data warehousing and data marts, struggled with scaling to huge data volumes and slow query performance, so on and so forth. As time progressed, true Big Data platforms like Hadoop emerged…and are now joined by Amazon Redshift, Google BigQuery, and others. In this age of limitless scalability of data volume, the “data lake” was born.

The promise of these platforms is enticing – store all your data, forever. Instead of pruning the data to fit into available data repositories, it became possible to simply store everything. Data in the lake is typically imported in its natural state – in its source format – and then usually some degree of ETL (or ELT, depending on how you look at it) to make the data palatable for reporting and analysis.

If there’s one common success across organizations and their data lakes, it’s the ability to store vast amounts of data – including lots of data that used to be lost. And if there’s one common challenge, it’s that when you try to run BI tools for reporting and analysis against the data lake, the performance is really slow – and/or there’s just simply too much data there to process. The queries that users need to run in order to perform their analytics might take several minutes…or hours. Or maybe run for an hour only to get no results, because the sheer scale of the data was just too big for the tool to deal with.

One typical response to this pervasive issue is for users to create their own extracts of data out of the lake – they’ll use whatever given tool is available to run that hour-long query, then export the data and use it in Excel for example – or maybe make a .tde file in Tableau to hold the data, without having to re-run that query again later. Perhaps at first glance this seems like a reasonable solution – and it’s a fine example of the age-old tendency of users to find workarounds for the things that stand in the way of them getting their jobs done. But it actually introduces a number of headaches…

First of all, if we’re going with the metaphor of a “data lake” to begin with, essentially what these users are doing is creating a bunch of puddles. Little puddles of data, manageable in size, that have been extracted from the data lake for their own re-use (and probably continued re-use by their colleagues). The data lake itself, while a massive collection of potentially every piece of data the company has, is a managed and governed repository…there are proper access controls and security protocols for example, ensuring that users can only get to data that they should have access to. There’s processes around getting data into the data lake – keeping it stocked with fresh, accurate data. There may be a Center of Excellence or similar organization managing the BI tools that are in use against the data lake as well – ensuring that valid metadata is created and maintained for each tool, safeguarding against poorly-formed queries and invalid query results. But what’s happening with all these puddles?

A puddle of data that has been extracted from the data lake is no longer being managed and updated with new data as time goes on – so how current is this data? For that matter, when the data was extracted, was there anything done (like in the query tool used to do the extraction) that could potentially have gone awry – like an invalid table join or incorrect calculation? Who’s responsible for the accuracy and validity of the data in this puddle? And when that puddle is out of the lake – maybe it’s a .csv, or a .xls, or a .tde, etc. – what kind of security is there on that data? Who’s getting access to it, and who’s responsible for enforcing security controls on that data?

On top of those important questions about the information in one of these puddles, you also have to keep in mind that by definition, these are going to be pretty small selections of data. There’s only so much data volume that can be managed in these puddles by whatever tool they’ve been created for – so as an additional side-effect to having pulled that data out of the lake and storing it elsewhere, the user also loses access to anything other than that small set of data while doing their analysis. They won’t have the benefit of the greater context of the full data set, and may just miss out on some important information simply because the data isn’t there. So really, the issues with these puddles of data can basically be categorized into three separate concerns – governance, validity, and scale. Each one of those is something that should be closely paid attention to, because they all have serious ramifications for the organization.

To a frustrated user, simply trying to do their job with the data they need in order to do it, fishing data out of the lake and making their own puddles is a workaround that enables them to be more effective or efficient. It takes away at least some of the aggravation of having to sit and wait for needed queries to execute when they really should be investigating the results of that query and using that data to inform their critical business decisions. But the flipside of this workaround can become a nightmare for IT and operations…untold numbers of data puddles all over the organization, not stored in any managed repository but rather sitting around on individual desktops, network shares, maybe even a USB memory stick. No governance or control over that data…where it is or who has access to it. No idea if decisions are being made on stale or incorrect information. No visibility to what was done during or after the extraction with that data.

Naturally you might imagine a new internal policy being issued that “outlaws” making any data extracts. Citing the reasons above around governance and security and data integrity, you can make a case that people just shouldn’t do those things – but that’s avoiding the issue, not solving it. The issue isn’t that people are sloshing data out of the lake into puddles, it’s that they don’t see a reasonable way to get their jobs done unless they do.

The proper way to go about mopping up those puddles, and ensuring they don’t get rehydrated again later, is to address the underlying issue – painfully-long query execution times and/or data volumes that just can’t be processed effectively. The traditional ways to approach that would include things like adding nodes to your Hadoop cluster, or doing diligent analysis of your user’s queries and designing, implementing, and maintaining ETL processes to make summaries of that data ahead of time…which in and of itself is time-consuming and expensive, requires a lot of new care and feeding, and introduces additional latency between when the data is created and when the users can get to it. Adding raw horsepower with more hardware is a brute-force approach that adds a lot of cost and ultimately is unlikely to succeed on its own – you’ll almost certainly have to add in some ETL processes too, which introduces weeks-long analysis, development, testing, and deployment for each aggregate you want to make. With enough brute force (hardware) and data wrangling (ETL), you can ultimately get to where you need to be for a given report or dashboard. But once you get that dashboard solved, there will be another one…and another one…and, well, you get the idea.

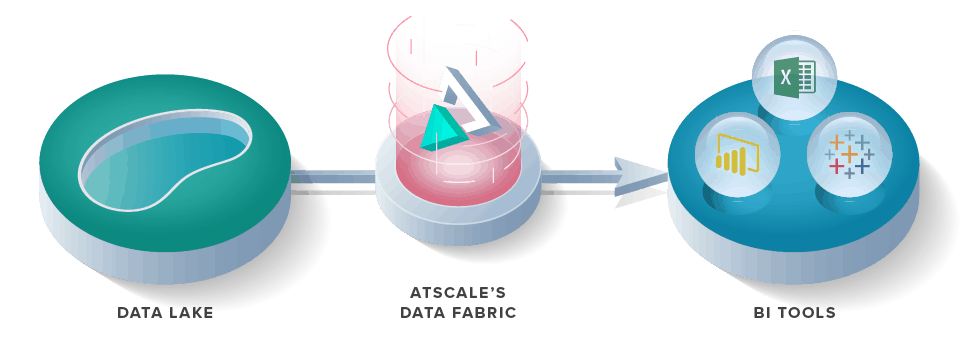

AtScale introduces a novel way, and indeed the most effective way, to eliminate the puddles from your data real estate. With AtScale, your users’ behavior automatically shapes the data – AtScale watches and learns from your users as they work with their BI tools…it responds automatically, creating any and all aggregates that would best support the BI activities they are undertaking via machine learning. AtScale’s Adaptive Cache is the collective name for these aggregates, created in response to user behavior and constantly adapted to their changing needs. AtScale automatically gives the users the optimal set of aggregates they need in order to achieve conversational response time with their BI tools – no more minutes-long (or hours-long) queries; most queries against AtScale’s Adaptive Cache are sub second. Users can freely interrogate and explore their data sets without constraint, and without the constant interruption of their analytical processes by unbearably long-running queries. And then, because they have the performance they need on the entire scope of data that is available on the data lake, they no longer have the need to create their own puddles. They can use their BI tools with a live, direct connection and always have the freshest data available, with centralized governance, security, and control as provided by the AtScale Intelligent Data Fabric – all your BI tools coordinated together with a single foundation that ensures that everybody, everywhere is getting fast, accurate results with the requisite security and control that is needed in your organization. Access to all the data, all the time. No puddles. No additional ETL work. An end to never-ending quests to make the cluster bigger.

Conversational response time — on all the data in the lake…regardless of your BI tools.

With AtScale you can find “Data as a Service” – a state where the data that your users need is available to them, at all times, with any tool, with the performance they want and the controls you need. A single platform to unify all your disparate BI tools while scaling them to the full extent of the data in your lake.

And no more puddles.

SHARE

2026 State of the Semantic Layer