Every decade, someone reinvents the BI-embedded model. Every decade, customers end up migrating off it. The semantic layer is the context layer for AI, and putting it inside one BI tool was the original sin.

Last week, Omni, built by the team that built Looker, raised a $120 million Series C at a $1.5 billion valuation, led by ICONIQ. The pitch is the same one Business Objects shipped in 1990: put the semantic layer inside the BI tool, next to the dashboard, close to the user.

That answer was already wrong in 2015, when Looker tried it and ended up acquired by Google for $2.6 billion. It’s much more wrong in 2026. The decision-maker isn’t sitting at a dashboard anymore. The decision-maker is asking a chatbot. Half your users aren’t clicking at all. They’re typing into Claude, Cursor, ChatGPT, or a custom agent that hits the warehouse over MCP and never opens a BI tool.

The foundation can’t live inside one UI when most consumers don’t have a UI. They have an AI that needs context.

Every BI vendor is shipping the same trap

Omni isn’t alone. Every BI vendor is racing to bolt a semantic layer onto its product and call it AI-ready.

Microsoft renamed Power BI datasets to “semantic models” and pitched them as the foundation of Microsoft Fabric’s AI strategy, with definitions that live inside the Power BI engine and speak DAX. Tableau shipped VizQL Data Service so agents can query Tableau’s published data sources, with the catch that the semantics live inside Tableau Server. ThoughtSpot launched Spotter Semantics in March 2026 as an “AI-native semantic foundation” tied to ThoughtSpot’s proprietary query engine. Sigma announced Data Models in late 2024 as its own embedded semantic layer.

The marketing is identical. The architecture is identical. The model is the foundation, the BI tool is the home, the agent is welcome to query as long as it speaks the BI vendor’s API. Same trade Business Objects Universes made in 1990. Same trade LookML made in 2012. The user gets a beautifully modeled semantic layer and a vendor lock-in agreement bundled together.

It works when one tool owns the enterprise. It fails the moment a second consumer shows up.

In 2026, no enterprise has a second consumer…

They have dozens. Engineers using Claude and Cursor for code generation, both calling internal data through MCP. A sales team running ChatGPT Enterprise on the deal pipeline. A CFO chatbot built on OpenAI and a customer-facing copilot built on Anthropic. A LangChain agent product marketing built last Tuesday and a Snowflake Cortex agent the data team built last Friday. Customer support agents inside Zendesk and Intercom asking AI for the customer’s lifetime value while the call is live.

The governance team asks for one number. Twenty tools return twenty. The semantic layer sits inside one of them. The other nineteen consumers are flying blind, and they’re now the majority.

Sigma, to its credit, has started to admit this. In June 2025, Sigma launched native integration with Snowflake Semantic Views, explicitly putting the layer in the warehouse instead of in the BI tool. Sigma’s CEO called it “meeting the semantic layer where it belongs.” That’s the right instinct. It’s also a quiet concession that the BI-embedded model doesn’t survive contact with multi-agent reality.

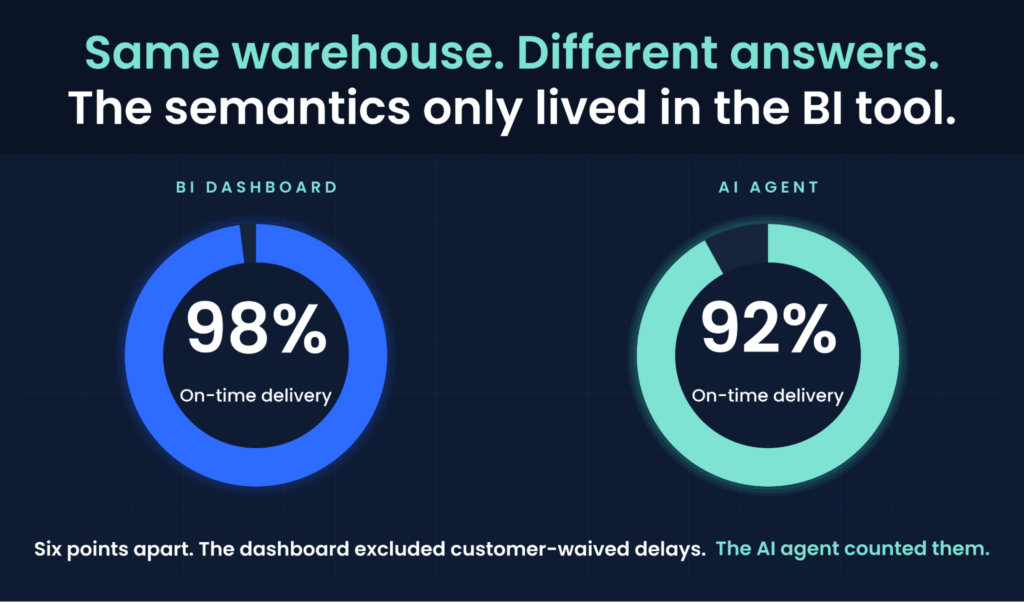

The six points that broke the AI team

At a global logistics company, the BI dashboard reported 98% on-time delivery. The route-exception agent the supply chain team had built on Claude, querying the same warehouse, reported 92%. Same warehouse, same definition of “truth,” six points apart. Nobody could explain why.

The reason lived inside the BI tool. A filter excluding “customer-waived delays” was defined in the tabular model. The agent had no way to see it because the context wasn’t where the agent could read it. The agent wasn’t wrong. The dashboard wasn’t wrong. The architecture was wrong, because it put the authoritative definition of “on-time,” and the context that defined what “on-time” actually meant, inside a tool that only the BI engine could read.
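A rough sketch of how one hidden filter can open a gap like that. The row counts below are invented for illustration (the company's real data isn't public), and the field names `late` and `customer_waived` are assumptions, not the actual schema:

```python
# Hypothetical data: 100 deliveries, 8 of them late,
# and 6 of those 8 delays were waived by the customer.
deliveries = (
    [{"late": False, "customer_waived": False}] * 92
    + [{"late": True, "customer_waived": True}] * 6
    + [{"late": True, "customer_waived": False}] * 2
)


def on_time_rate(rows, exclude_waived):
    """Share of deliveries that were on time, optionally dropping waived delays."""
    if exclude_waived:
        rows = [r for r in rows if not r["customer_waived"]]
    on_time = sum(1 for r in rows if not r["late"])
    return on_time / len(rows)


# The dashboard applies the filter defined inside the BI model.
dashboard_rate = on_time_rate(deliveries, exclude_waived=True)
# The agent queries the same rows but never sees that filter.
agent_rate = on_time_rate(deliveries, exclude_waived=False)

print(f"dashboard: {dashboard_rate:.0%}, agent: {agent_rate:.0%}")
```

Both functions are "correct." They just aren't computing the same thing, and only one of them knows it.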

Six points isn’t a rounding error. It’s the difference between a CFO trusting the AI team and a CFO asking why. And it’s about to multiply, because every enterprise is about to deploy domain agents that query the same warehouse from outside the BI tool, with no access to the context the BI tool kept to itself.

The Looker lesson. Again.

The awkward part of every BI-native semantic pitch is that the industry has run this experiment before. LookML was beautifully modeled and locked to one vendor. Then Google bought Looker, LookML’s roadmap slowed, and customers who’d invested years of metric definitions discovered exactly how portable “portable” wasn’t. The ones who got out did it the expensive way. The ones who stayed are still stuck.

Now Omni is selling LookML’s architecture with a nicer UI, at a $1.5 billion valuation that all but guarantees the next acquirer. ThoughtSpot is selling it with a knowledge graph. Power BI is selling it as part of Fabric. Each one promises this time the lock-in is worth it because the AI integration is special. Each one is wrong for the same reason: the next AI agent your enterprise builds isn’t going to come from the BI vendor.

Every decade, someone reinvents the BI-embedded semantic model and declares it the future. Every decade, the companies that built their metrics that way end up migrating them.

The three tests

Any semantic layer worth the name has to pass three tests. The first two are AI tests now. The third is the one most vendors are still trying to fake.

Test 1: Multi-agent. Can a Claude analytics agent, a Cursor code agent, a ChatGPT chat agent, a Snowflake Cortex agent, and a custom LangChain agent all query the same governed metric and get the same answer with the same definition of “revenue,” “churn,” and “on-time”? If only one of them works because it’s the one your BI vendor shipped, you don’t have a semantic layer. You have a vendor’s agent strategy.

Test 2: Agent-grade governance. When an agent answers a CFO’s question, can you prove which version of which definition produced the number, who is allowed to see it, and what filters were applied? BI-native semantic models were built for human users staring at a dashboard. Agents query at machine speed across thousands of permission boundaries. A semantic layer that can’t enforce row-level security and metric lineage at agent volume will leak data, and it will have no answer the day a regulator asks how.

Test 3: Multi-everything-else. The agents have to coexist with the rest of the stack. Tableau and Power BI users on the BI side. Snowflake, BigQuery, and Iceberg on the storage side. Excel on the desktop, Jupyter in the notebook, MCP on the wire. The same metric definition has to work against all of them, on the same day, with no rebuild. BI-native semantic layers pick one warehouse, optimize there, and the rest go to seed.

Three tests. BI-native semantic models fail all three the moment your enterprise deploys its second AI agent. Which it already has. Or it will by the time your CFO asks why the bot got a different number than the dashboard.
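To make Test 2 concrete, here is a minimal sketch of what "prove which version, who, and what filters" could look like in code. Everything in it is hypothetical: `MetricDefinition`, `AuditLog`, and `authorize_query` are illustrative names, not any vendor's API, and a real system would enforce this in the query engine rather than in application code.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class MetricDefinition:
    """A governed metric: the definition, its version, and who may read it."""
    name: str
    version: str
    filters: tuple
    allowed_roles: frozenset


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)


def authorize_query(metric, agent_id, role, row_filter, log):
    """Return the filters to apply, or raise. Either way, record what happened."""
    allowed = role in metric.allowed_roles
    # Metric-level filters plus the caller's row-level restriction.
    applied = metric.filters + (row_filter,) if allowed else ()
    log.entries.append({
        "agent": agent_id,
        "role": role,
        "metric": metric.name,
        "version": metric.version,
        "filters": applied,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not read {metric.name}")
    return applied


REVENUE = MetricDefinition(
    name="revenue",
    version="2026-01",
    filters=("recognized = 1",),
    allowed_roles=frozenset({"finance"}),
)
log = AuditLog()

# A finance agent is allowed; its region restriction is appended and logged.
filters = authorize_query(REVENUE, "cfo_chatbot", "finance", "region = 'EMEA'", log)

# A support agent is refused, and the refusal is logged too.
try:
    authorize_query(REVENUE, "support_copilot", "support", "region = 'EMEA'", log)
except PermissionError:
    pass
```

The point of the sketch is the log entry: every answer, and every refusal, carries the metric version and the exact filters applied, so the question "which definition produced this number?" has an answer that doesn't depend on which tool asked.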

Context is what AI needs

A dashboard user knows “revenue” means GAAP revenue because they sit in finance meetings. The agent has no finance meetings. It has whatever the semantic layer hands it. If that layer lives inside one vendor’s BI tool, every agent outside is making things up.

A universal semantic layer sits above the warehouse and below every consumer. Every BI tool, every notebook, every agent, every app. None of them own it. All of them query it. One answer comes back, and it carries the same context with it.
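As a toy illustration of that shape, assume a metric is defined once as data and compiled to SQL for whoever asks. The `Metric` class, the `deliveries` table, and the consumer names are all made up for this sketch; it is not AtScale's API or the Open Semantic Interchange format, and it uses an in-memory SQLite database as a stand-in for the warehouse.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A metric defined once, outside any consumer (hypothetical schema)."""
    name: str
    table: str
    expression: str
    filters: tuple = ()

    def to_sql(self) -> str:
        where = " WHERE " + " AND ".join(self.filters) if self.filters else ""
        return f"SELECT {self.expression} AS {self.name} FROM {self.table}{where}"


# The filter travels with the definition instead of hiding in one tool.
ON_TIME = Metric(
    name="on_time_rate",
    table="deliveries",
    expression="AVG(CASE WHEN late = 1 THEN 0.0 ELSE 1.0 END)",
    filters=("customer_waived = 0",),
)


def query_metric(conn, metric):
    """Every consumer calls this instead of writing its own SQL."""
    return conn.execute(metric.to_sql()).fetchone()[0]


# Stand-in warehouse with invented rows: (late, customer_waived).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE deliveries (late INTEGER, customer_waived INTEGER)")
rows = [(0, 0)] * 92 + [(1, 1)] * 6 + [(1, 0)] * 2
conn.executemany("INSERT INTO deliveries VALUES (?, ?)", rows)

consumers = ["bi_dashboard", "notebook", "route_exception_agent"]
answers = {c: query_metric(conn, ON_TIME) for c in consumers}
print(answers)
```

None of the three consumers owns the definition, so none of them can drift from it. Change the filter in one place and every answer changes together.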

AtScale has shipped that architecture for thirteen years. Open Semantic Interchange, which AtScale is part of alongside Snowflake, Salesforce, and dbt Labs, is the open standard version of it. The list of vendors who agreed to publish their semantic metadata in a neutral format doesn’t include Omni. It doesn’t include Tableau. It doesn’t include Power BI. It doesn’t include the BI-native model. Context only works if every consumer can read it.

The BI-native semantic model was a good idea in 1990. It’s a trap in 2026. Every decade, someone reinvents it. Every decade, customers end up migrating off it. Context is what AI needs, and context only works when it’s open, portable, and yours.

