In our most recent webinar, Greg Mabrito of Slickdeals and Dave Mariani of AtScale shared how the Slickdeals team delivers analysis-ready data from its Snowflake Data Warehouse running on Amazon Web Services to support business-critical decisions. Couldn’t attend the webinar? In this blog post, we share a brief recap of what you missed!

Slickdeals is a leading social shopping platform dedicated to sharing, rating, and reviewing deals and coupons. They help their users build a community of savvy shoppers who help each other find the best deals on the products they love. With over 12 million monthly users and over $6.8B saved, the Slickdeals community continues to grow at an accelerated rate. How are they keeping up? Mabrito walks us through their journey, sharing how they have evolved over the years and found their way to AtScale.

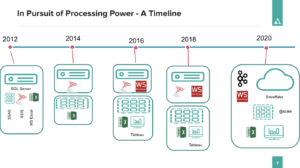

“2020 was a big year of change for a lot of reasons, but here at Slickdeals, we made some major changes within our data platform,” says Mabrito. He speaks to his team’s need for a scalable data infrastructure and the seven barriers his team faced:

- Scalable Data Infrastructure

- Semi-Structured Data

- Data Movement Constraints

- Loose Coupling

- Agility and Speed

- Self-Service Analytics & Extensibility

- Data Volume Growth

Greg later describes his team’s leap to the cloud: where they began with their default ingestion pattern, the changes they made with their Snowflake integration, and their final view with AtScale. Mabrito states, “We’re avoiding the egress problem with this hybrid approach because we’ve got AtScale on-prem. It works really nice for us. We’re able to control costs, plus the engineer aggregates help us because we don’t really know what the users are going to ask of the data. We can’t know that across the board for all of our users. So this is very, very helpful for us and it makes us much more productive.”

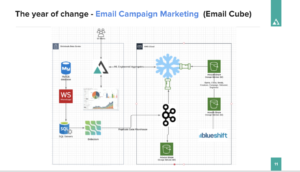

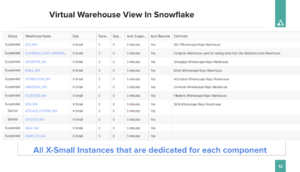
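
Mabrito’s point about aggregates can be illustrated with a small sketch of aggregate-aware routing: when a query arrives, answer it from the smallest pre-built aggregate that keeps every dimension the query needs, and fall back to the base table otherwise. This is a simplified illustration, not AtScale’s actual algorithm. The table names, row counts, and the `pick_table` helper are all hypothetical, and the sketch assumes additive measures (sums, counts) that can safely be rolled up.

```python
# Hypothetical catalog of pre-built aggregate tables (names and sizes are made up).
AGGREGATES = [
    {"name": "agg_day_channel", "dims": {"day", "channel"}, "rows": 50_000},
    {"name": "agg_day", "dims": {"day"}, "rows": 400},
]
BASE_TABLE = "fact_clicks"  # hypothetical raw fact table


def pick_table(query_dims, aggregates=AGGREGATES, base_table=BASE_TABLE):
    """Return the smallest table that can answer a query grouped by query_dims."""
    needed = set(query_dims)
    # An aggregate qualifies only if it keeps every dimension the query groups by.
    candidates = [agg for agg in aggregates if needed <= agg["dims"]]
    if not candidates:
        return base_table  # no aggregate covers the query, so scan the raw data
    return min(candidates, key=lambda agg: agg["rows"])["name"]


print(pick_table(["day"]))       # served by the small daily aggregate
print(pick_table(["channel"]))   # needs the day-by-channel aggregate
print(pick_table(["campaign"]))  # no aggregate has this dimension
```

Because users’ questions cannot be predicted in advance, the useful property here is the fallback: a query that no aggregate covers still gets an answer from the base table, just more slowly.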

Greg continues to share a behind-the-scenes look at how his team worked with Snowflake to achieve ultimate flexibility and agility. He states, “We have complete decoupling from our presentation layer and AtScale and processing on the back end for the different components, whether it’s load or stage and all of our cube processing. Depending on how many users we bring onto that cube or how active it is, we can spin that up and get more capacity, to really improve the user experience.”

Mabrito then goes into detail on the team’s greatest milestone, their search engine marketing cube. He shares how they can take their data in Snowflake and then issue continuous rebuilds pretty quickly with multiple attribution models, new metrics and functionality. Greg speaks to how this new functionality has improved efficiency throughout the team: “There’s no more long waits to deploy a model. The ability to deploy and test models quickly really helps the team move faster.”

With this success, Mabrito and his team are looking ahead to the end of the year, to their goals for the Search Engine Marketing Cube, and to retiring SSAS. How is their Snowflake migration going? Mabrito states, “Snowflake migration is in process right now, in dual production mode with SQL Server. And so we’re taking our time and refactoring all the pipelines for our event-driven architecture so that we can really bring fresher data in faster, less latent, to meet our needs of business.”

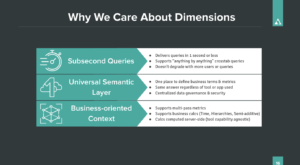

Dave Mariani speaks to what kept Greg and his team circling back to SSAS: speed. “SSAS is really fast, it is speed of thought fast. In today’s cloud world, a lot of people have gotten used to queries coming back in 10, 20, 30 seconds, even minutes. People should be able to get an analyst and be able to ask the next question once they’ve got the answer to the previous one, and that’s really important.” Mariani also speaks to the appeal of a single source of truth (SSOT) created by a single semantic layer and how it’s “critical to have everybody speaking the same language and to hide some of that complexity of where the data is.” Dave ends on attribution, reflecting on Greg’s journey and how to turn data into consumable analytics with a dimensional approach.

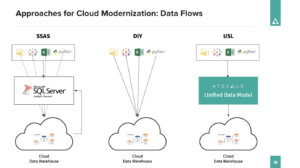

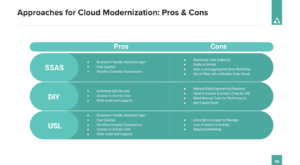

Mariani outlines three approaches for dimensional analytics in the cloud, along with the pros and cons of each:

- SSAS: Keep SSAS as the dimensional engine loaded from a cloud data warehouse.

- DIY: Abandon dimensional analytics and build reports and dashboards directly on a cloud data warehouse.

- Universal Semantic Layer: Add a dimensional, universal semantic layer on top of your cloud data platform(s).

Mariani emphasizes the benefits of a Universal Semantic Layer: “You can have your cake and eat it too. A universal semantic layer, or a unified data model, does sort of the best of both worlds. It gives you direct access to the cloud and the cloud data warehouse allows you to support all those tools, by getting a single view of that data.” He continues, “You’re not restricted by size, you’re not restricted by scale and the data stays here… You want to be able to scale your cloud data warehouse or your data warehouse, and not have to worry about scaling your semantic layer… When moving to the cloud, you don’t have to worry about scale anymore on your data warehouse… You should be demanding the same thing for your business intelligence engine. It shouldn’t have to scale itself. It should scale with your data.”
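
To make the idea of a “single view of that data” concrete, here is a minimal sketch of what a semantic layer does conceptually: business names for dimensions and metrics are defined once, and every tool’s request is compiled to SQL that runs in the warehouse, so the data stays where it is. This is an illustration under stated assumptions, not AtScale’s API. The `SemanticModel` class, the `sem_clicks` table, and the column names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SemanticModel:
    """Hypothetical single definition of business terms over one warehouse table."""
    table: str
    dimensions: dict  # business name -> physical column
    metrics: dict     # business name -> SQL aggregate expression

    def to_sql(self, dimensions, metrics):
        """Compile a request phrased in business names into warehouse SQL."""
        dim_cols = [f"{self.dimensions[d]} AS {d}" for d in dimensions]
        metric_cols = [f"{self.metrics[m]} AS {m}" for m in metrics]
        sql = f"SELECT {', '.join(dim_cols + metric_cols)} FROM {self.table}"
        if dimensions:
            group_by = ", ".join(self.dimensions[d] for d in dimensions)
            sql += f" GROUP BY {group_by}"
        return sql


# Made-up model: every BI tool asks for "total_cost" and gets the same definition.
model = SemanticModel(
    table="sem_clicks",
    dimensions={"channel": "channel_name"},
    metrics={"total_cost": "SUM(cost_usd)"},
)
print(model.to_sql(["channel"], ["total_cost"]))
```

Because the metric definition lives in one place, changing how `total_cost` is calculated changes it for every report at once, which is the “same language” benefit Mariani describes.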

In the webinar’s demonstration, Dave uses AtScale’s Universal Semantic Layer, Snowflake, and a variety of BI tools to show how data-driven teams can virtualize data leads.

Want to view the full webinar? Catch the on-demand webinar here.
