Many companies face challenges in developing a roadmap for data, insights, and analytics. This blog stresses how an enterprise data strategy is a critical guidepost for articulating an effective set of use cases and a clear roadmap that scales with the impact of data, insights, and analytics. Data is the fuel for addressing key business questions. By connecting business questions to the right data sources, organizations can create a roadmap that delivers increasingly valuable insights as their data capabilities mature — with AtScale accelerating this journey.

These days, the sheer complexity of managing information while preparing for AI-driven analytics feels overwhelming. Organizations juggle data democratization, evolving regulations, and the pressure to extract value from their digital assets. Think of an enterprise data strategy as your North Star — guiding the alignment of data, people, technology, and governance toward concrete business wins and AI readiness.

What Is an Enterprise Data Strategy?

An enterprise data strategy represents your organization’s master plan for managing, governing, and leveraging data to hit business targets while enabling AI-powered insights. This isn’t just another framework — it’s the blueprint that scales analytics, builds data confidence, and transforms AI initiatives from experimental projects into operational powerhouses across your entire organization.

Here’s what makes it tick: your enterprise data strategy creates direct links between data assets and business results. Every data project should push organizational goals forward while staying nimble enough to embrace emerging tech like generative AI, machine learning, and automated decision systems.

Why an Enterprise Data Strategy Matters

As companies move to modern cloud platforms to improve data, insights, and analytics, many realize that the “last mile” of preparing, analyzing, and publishing insights — the data consumption layer for business intelligence — takes too long to create, refresh, and refine. The key to accelerating actionable insights is developing an effective data source strategy. This strategy must establish standards for data acquisition, preparation, integration, and publishing to support business intelligence creation.

The urgency around a robust enterprise data strategy has reached a tipping point. In fact, Gartner predicts that 80% of data strategies will fail without strong governance frameworks.

Today’s organizations need comprehensive data strategies for several critical reasons:

- Compete effectively in markets where data-driven insights create competitive advantages

- Meet compliance obligations across increasingly complex regulatory landscapes

- Enable predictive and generative AI at scale while maintaining data trust and explainability

- Ensure trusted data for AI initiatives that require clean, governed, bias-free datasets

- Accelerate analytics velocity to support real-time decision-making

- Scale AI innovation across business units without weakening compliance controls

Without strategic thinking, organizations get stuck in data quicksand — isolated information pools, conflicting metrics, and the frustrating inability to harness AI effectively. Meanwhile, competitors who’ve cracked the code on democratizing data access while maintaining governance standards are pulling ahead.

Aligning Data Strategy with Business Objectives

Your enterprise data strategy must forge direct connections to measurable business wins instead of getting lost in technical complexity. Smart organizations tie their data initiatives to specific, quantifiable targets like:

- Improve customer retention through predictive analytics and personalized experiences

- Optimize operational efficiency by identifying bottlenecks and automating workflows

- Reduce costs through better resource allocation and waste reduction

- Enable AI innovation that drives new revenue streams and competitive advantages

The metrics that matter include customer lifetime value improvements, operational cost cuts, faster time-to-insight, and AI model precision rates. Real success happens when you connect business objectives directly to AI and analytics applications: cutting customer churn with predictive models, automating workflows through intelligent systems, sharpening forecast accuracy via machine learning, and delivering personalized customer experiences through real-time data processing.

Assessing Your Current Data Maturity

Before crafting a comprehensive strategy, organizations need to honestly evaluate their data readiness across multiple fronts:

- Data Architecture Readiness for AI means checking whether your existing systems can handle modern AI workloads — real-time processing, unstructured data management, and scalable computing resources.

- System Integration and Silos Audit requires mapping every data source, spotting integration gaps, and documenting how information flows to understand where data gets trapped in departmental corners.

- Analytics Maturity Measurement looks at current capabilities spanning basic reporting to advanced predictive analytics, helping organizations pinpoint their AI launching pad.

- Dark Data Identification matters more than ever since research indicates roughly 90% of enterprise data sits unanalyzed, representing massive untapped potential for AI initiatives and business insights.

- Cultural Readiness and Leadership Alignment assessment checks whether your organization has the change management muscle and executive backing needed for successful data strategy execution.

Core Pillars of a Modern Enterprise Data Strategy

A comprehensive enterprise data strategy builds on six essential pillars working in concert to create a robust, scalable foundation:

- Data Governance and Compliance creates the policies, procedures, and standards ensuring data quality, security, and regulatory compliance while fostering innovation and accessibility.

- Architecture and Integration covers modern approaches, including semantic layers, data mesh architectures, and comprehensive data catalogs providing unified access to distributed data assets.

- Data Quality and Trust centers on implementing automated data validation, lineage tracking, and quality monitoring so AI systems and business users can depend on accurate, consistent information.

- AI/Analytics Enablement handles both structured and unstructured data preparation, ensuring data architecture supports everything from traditional business intelligence to advanced machine learning and generative AI applications.

- Culture and Change Management powers data literacy initiatives, upskilling programs, and organizational transformation to build a genuinely data-driven culture where employees across all functions can effectively leverage data tools and insights.

- Measurement and Continuous Improvement creates metrics and feedback loops for continuously optimizing the data strategy, measuring ROI, and adapting to shifting business needs and technological advances.

Data Architecture for Scale and Flexibility

Building modern data architecture feels like constructing a bridge while people are still walking on it. Organizations need systems that grow with exploding data volumes, handle everything from spreadsheets to streaming sensor data, and somehow stay flexible enough for tomorrow’s analytics requirements that haven’t been invented yet.

The truth is, most companies underestimate how quickly their data needs will evolve. What starts as a straightforward reporting project suddenly needs to support real-time dashboards, ML models, and natural language queries from executives who want answers now. This is where architectural decisions made today either become the foundation for future success or the bottleneck that slows everything down.

Here’s what works in practice:

Semantic Layers: Making Data Speak Business Language

Think of semantic layers as the translation service between technical data and business understanding. Instead of forcing every analyst to become a database expert, these layers create a unified business view that abstracts away the messy technical details. When someone asks for “monthly recurring revenue,” they get consistent results whether the data comes from Salesforce, the billing system, or last quarter’s spreadsheet exports.

A well-designed semantic layer strategy becomes that crucial piece that infrastructure teams wish they’d built from day one. It’s the difference between having fifty slightly different versions of “customer count” floating around the organization and having one authoritative definition that everyone trusts.
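
To make the idea concrete, here is a minimal sketch of "define the metric once, reuse it everywhere." It is not how any particular semantic layer product works. The `Metric` class, the `METRICS` registry, the field names, and the rule for spreading annual plans over 12 months are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Metric:
    """A hypothetical, centrally owned metric definition."""
    name: str
    description: str
    compute: Callable[[Iterable[dict]], float]


def monthly_recurring_revenue(subscriptions: Iterable[dict]) -> float:
    # Assumed business rule: only active subscriptions count,
    # and annual plans are spread evenly over 12 months.
    total = 0.0
    for sub in subscriptions:
        if sub["status"] != "active":
            continue
        months = 12 if sub["billing_period"] == "annual" else 1
        total += sub["amount"] / months
    return total


# One registry that every dashboard, notebook, or AI tool queries.
METRICS = {
    "monthly_recurring_revenue": Metric(
        name="monthly_recurring_revenue",
        description="Active subscription revenue normalized to one month",
        compute=monthly_recurring_revenue,
    ),
}


def query(metric_name: str, rows: Iterable[dict]) -> float:
    """Look up the shared definition instead of re-deriving it per tool."""
    return METRICS[metric_name].compute(rows)


# Rows from two hypothetical sources, already mapped to the same shape.
crm_rows = [
    {"status": "active", "billing_period": "monthly", "amount": 100.0},
    {"status": "cancelled", "billing_period": "monthly", "amount": 50.0},
]
billing_rows = [
    {"status": "active", "billing_period": "annual", "amount": 1200.0},
]

mrr = query("monthly_recurring_revenue", crm_rows + billing_rows)
print(mrr)
```

The point of the sketch is the registry, not the arithmetic: if the rule for annual plans changes, it changes in one place and every consumer picks it up.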

Data Mesh Meets Reality

The data mesh concept sounds appealing — let each domain own its data while maintaining some overall coherence. In practice, though, it’s trickier than the conference presentations make it seem. Domain experts often have deep business knowledge but limited experience with data governance protocols. Meanwhile, central IT teams understand the technical requirements but may miss nuanced business rules.

Most organizations end up with hybrid approaches that borrow the best ideas from data mesh while keeping enough central oversight to prevent chaos. Domain teams get autonomy over their data definitions and quality standards, but they follow enterprise-wide frameworks for security, privacy, and integration patterns.

The Centralized vs. Federated Dilemma

Every data leader faces this choice: lock everything down centrally for consistency, or federate ownership and accept some messiness in exchange for speed and domain expertise. Neither extreme works particularly well.

Centralized approaches often create bottlenecks where business teams wait weeks for simple data requests. Fully federated models can devolve into data anarchy, where nobody’s quite sure which numbers to trust. The organizations that get this right typically centralize governance policies and standards while federating the actual data management and stewardship responsibilities.

What emerges from these architectural patterns — when they’re implemented thoughtfully rather than copied from vendor presentations — are data operations that can scale. They handle growth without breaking, support new analytical requirements without massive re-engineering projects, and somehow maintain enough agility to adapt when business needs shift unexpectedly.

Industry frameworks from companies like Tableau and IBM provide helpful starting points. But the real success comes from adapting these patterns to the specific messiness of each organization’s data landscape, legacy systems, and cultural dynamics.

The Role of AI in Enterprise Data Strategy

AI has become the shiny object that every executive wants to talk about in quarterly reviews. But beneath the hype, there’s a fundamental shift happening in how organizations need to think about their data infrastructure. The old approach of “build it and they will come” doesn’t work when AI systems are involved — they’re pickier about data quality than the most demanding analyst.

Most enterprises find themselves preparing for three distinct flavors of AI deployment, each with its own quirks and requirements. Semantic layers play a crucial role in enterprise AI initiatives, though not always in the ways vendors initially promised.

Generative AI: The Data Glutton

Large language models and conversational BI applications are hungry for clean, well-governed data. They’ll happily ingest messy information, but the results can be spectacularly wrong in ways that traditional analytics never managed. RAG pipelines, in particular, have a knack for finding the one outdated document in the knowledge base and treating it as gospel.

The challenge isn’t just feeding these systems data — it’s ensuring they can access enterprise information through natural language interfaces without hallucinating results or exposing sensitive information to users who shouldn’t see it. Most organizations discover this the hard way during pilot projects.

Predictive Analytics: Still the Workhorse

While everyone’s excited about generative AI, predictive analytics continues handling the heavy lifting in most enterprises. These systems need historical data that tells a coherent story over time, plus real-time feeds that don’t break when something upstream changes its schema.

The forecasting models, risk assessment systems, and operational optimization algorithms that drive business decisions depend on data pipelines that stay consistent even when business conditions shift unexpectedly. This reliability requirement often conflicts with the rapid iteration cycles that AI teams prefer.

Agentic AI: The New Frontier

Autonomous decision-making systems represent the most demanding category for data architecture. These systems need semantic context and structured relationships between data elements to operate with minimal human oversight. The accountability requirements alone force organizations to rethink how they document data lineage and business rules.

Getting agentic AI right requires unprecedented levels of data quality and explainability. Most organizations aren’t remotely ready for this, despite what their vendors might suggest during sales presentations.

The Foundational Elements

AI initiative success depends on three unglamorous but essential building blocks that don’t get much attention in conference keynotes:

- Clean, governed, bias-free data that meets quality standards strict enough for automated decision-making. This sounds obvious until teams start measuring their current data quality and realize how much work lies ahead.

- Unified semantic layers that maintain consistency and explainability across AI applications. Without this consistency, different AI systems produce conflicting insights about the same business questions, which erodes trust faster than almost anything else.

- Scalable architectures that handle real-time streaming data alongside massive unstructured datasets. The technical requirements here often conflict with existing enterprise architecture standards, creating tension between innovation and operational stability.

Enterprise Data Strategy Examples

Rather than abstract frameworks, looking at how different industries implement data strategies reveals the messy reality of turning concepts into operational systems.

Retail: The Inventory Juggling Act

Retail organizations face the brutal reality of demand forecasting, where being wrong costs money immediately. Their unified inventory systems combine point-of-sale data, supply chain information, and external market signals, but the devil lives in the integration details.

Most retailers discover that “unified” is easier to say than achieve. Point-of-sale systems capture transactions differently across store formats, supply chain data arrives in batches with varying delays, and external market signals often contradict each other. The successful implementations focus less on perfect data and more on systems that function reliably with imperfect information while optimizing stock levels and reducing waste.

Financial Services: Fighting Fraud Without Annoying Customers

AI-powered fraud detection in financial services walks a tightrope between security and customer experience. These systems analyze transaction patterns, customer behavior, and external risk signals in real-time, but they’re only as good as their false positive rates.

The challenge isn’t identifying obviously fraudulent transactions — it’s catching sophisticated fraud without flagging legitimate customers who happen to behave unusually. This requires combining multiple data sources with nuanced business rules that evolve as fraud patterns change. Most financial institutions spend as much time tuning these systems as they do building them initially.
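
The tuning trade-off described above can be illustrated with a small sketch. It assumes a model that outputs a risk score between 0 and 1 and a single alert threshold; the scores and labels below are invented for illustration and do not come from any real system.

```python
def rates_at_threshold(scored, threshold):
    """Return (false_positive_rate, recall) for a list of (risk_score, is_fraud) pairs."""
    tp = sum(1 for score, fraud in scored if score >= threshold and fraud)
    fp = sum(1 for score, fraud in scored if score >= threshold and not fraud)
    fn = sum(1 for score, fraud in scored if score < threshold and fraud)
    tn = sum(1 for score, fraud in scored if score < threshold and not fraud)
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return false_positive_rate, recall


# Made-up scored transactions: (risk score, whether it was actually fraud).
scored = [
    (0.95, True), (0.80, True), (0.70, False), (0.40, True),
    (0.30, False), (0.20, False), (0.10, False), (0.05, False),
]

for threshold in (0.25, 0.50, 0.75):
    fpr, recall = rates_at_threshold(scored, threshold)
    print(f"threshold={threshold:.2f}  false positive rate={fpr:.2f}  fraud caught={recall:.2f}")
```

Lowering the threshold catches more fraud but flags more legitimate customers, and raising it does the reverse. Much of the ongoing tuning effort goes into choosing that operating point as fraud patterns shift.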

Healthcare: Privacy Meets Analytics

Healthcare providers building HIPAA-compliant patient analytics platforms face regulatory constraints that would make other industries weep. Integrating electronic health records, diagnostic imaging, and treatment outcomes while maintaining strict privacy controls requires architectural decisions that often prioritize compliance over analytical convenience.

The successful implementations typically involve more data engineering effort than anyone initially budgets for, plus ongoing compliance monitoring that adds operational overhead. But when these systems work, they genuinely improve patient care in measurable ways.

Technology Companies: Democratization Dreams

Technology companies developing self-service business intelligence platforms chase the goal of democratizing data access across engineering, marketing, and sales teams. The reality involves more governance than the “self-service” label suggests.

These platforms succeed when they balance ease of use with enough guardrails to prevent teams from drawing incorrect conclusions from incomplete data. Most discover that true self-service requires significant upfront investment in data preparation and semantic modeling — the opposite of the quick wins that business stakeholders typically expect.

Roadmap: Steps to Building an Effective Enterprise Data Strategy

Building a data strategy that works requires navigating the gap between executive expectations and operational reality. Most organizations start with ambitious goals and discover that data transformation takes longer, costs more, and involves more organizational politics than anyone anticipated.

The systematic approach that tends to survive contact with reality looks something like this:

1. Define business outcomes (and get specific about them)

This step sounds deceptively simple until teams try to nail down what “data-driven decision making” means for their organization. The conversations get interesting when executives realize they need to commit to specific, measurable objectives that data initiatives will either support or fail to deliver.

Smart organizations bypass generic goals like “improve analytics” and focus on concrete targets: reduce customer churn by 15%, cut operational costs in specific departments, or accelerate time-to-insight for quarterly planning. These specific commitments force alignment between technical capabilities and business value from day one.
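
A target like "reduce churn by 15%" is only specific once everyone agrees what it means numerically. The sketch below assumes the 15% is a relative reduction of a monthly churn rate. The customer counts are invented, and the function names are hypothetical.

```python
def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of customers lost during the period."""
    return customers_lost / customers_at_start


def target_after_relative_reduction(baseline_rate: float, reduction: float) -> float:
    """Apply a relative reduction (0.15 means 15% lower than baseline)."""
    return baseline_rate * (1 - reduction)


# Illustrative numbers only: 5,000 customers at month start, 200 lost.
baseline = churn_rate(5000, 200)
target = target_after_relative_reduction(baseline, 0.15)
allowed_losses = round(target * 5000)

print(f"baseline churn: {baseline:.1%}")
print(f"target churn:   {target:.1%}")
print(f"customers lost per month at target: {allowed_losses}")
```

Read as a relative cut, the goal moves churn from 4.0% to 3.4%. Read as percentage points, it would be impossible here, which is exactly the kind of ambiguity worth resolving before the project starts.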

2. Inventory what already exists (prepare for surprises)

The data discovery phase regularly produces revelations that change entire project scopes. Organizations think they know what data they have until they start cataloging current systems and evaluating actual data quality, accessibility, and governance gaps.

Shadow spreadsheets emerge from departmental corners. Critical business processes turn out to depend on systems that nobody in IT knew existed. Data quality that looked acceptable in small samples completely fails at scale. These discoveries are uncomfortable but essential for realistic planning.

3. Establish governance without killing innovation

Creating frameworks that balance data accessibility with security requirements sounds straightforward until teams encounter the inevitable tension between enabling innovation and meeting regulatory obligations. Most organizations swing too far in one direction initially.

Overly restrictive governance kills momentum and drives business users back to their spreadsheets. Insufficient governance creates compliance risks and data quality problems that compound over time. The sweet spot requires iteration and ongoing adjustment based on what happens when people use the systems.

4. Build architecture for today and tomorrow

Target-state architecture planning involves predicting future needs based on current requirements — a process that humbles even experienced architects. Modern approaches using semantic layers, cloud-native platforms, and API-driven integration patterns provide flexibility, but they also introduce complexity.

The most successful implementations start with pilot use cases that prove the architecture can handle both current needs and likely future AI initiatives, then scale based on what works rather than what looked good in the vendor demos.

5. Launch pilots that teach real lessons

Selecting high-impact, low-risk use cases for pilot projects requires balancing competing priorities that often conflict. High-impact projects typically involve complex data integration challenges. Low-risk projects may not demonstrate enough value to build organizational confidence and expertise.

The pilots that succeed focus on showcasing the potential of the data strategy while generating genuine business value and revealing operational challenges that need addressing before broader rollout.

6. Scale what works

Operationalizing successful pilot projects across the enterprise involves different challenges than the initial implementation. Systems that worked well with pilot data volumes may struggle at enterprise scale. Governance processes that seemed reasonable for small teams become bottlenecks when applied organization-wide.

Building the organizational capabilities needed for enterprise-wide adoption often requires more change management effort than the technical implementation itself.

7. Measure, learn, and adjust

Continuous optimization using defined KPIs, regular assessment cycles, and feedback loops sounds like standard project management until teams try to measure the business impact of data strategy initiatives. Traditional ROI calculations often miss the indirect benefits and long-term capabilities that data investments create.

Organizations that get this right develop metrics that capture both immediate business impact and strategic positioning for future opportunities, then use those insights to adapt the strategy as business needs and technological advances evolve.

This approach draws from methodologies like IBM’s six-step model but acknowledges that organizational realities rarely match vendor frameworks perfectly. The goal is to build sustainable data capabilities that evolve with business requirements rather than implementing someone else’s best practices unchanged.

Accelerating AI and Advanced Analytics Enablement

The promise of AI-powered analytics drives much of the current investment in data infrastructure, but the reality involves more mundane data preparation work than most organizations expect. Getting AI systems to produce reliable insights requires solving fundamental data problems that have been lurking in enterprise systems for years.

Structured and Unstructured Data: The Integration Challenge

Traditional relational data represents only part of the information landscape that AI systems need for accurate insights. Text documents, images, video files, sensor data, and social media feeds all contain business-relevant information, but integrating these diverse data types into unified analytical workflows remains surprisingly difficult.

Most organizations underestimate the effort required to create consistent processing pipelines that handle both structured database records and unstructured content. The technical integration challenges pale compared to the governance questions: who owns external social media data, how long should sensor readings be retained, and what privacy controls apply to document analysis?

Success in this area typically involves accepting that perfect integration may not be achievable initially, then building systems that function reliably with the data sources that are accessible and well-governed while gradually expanding coverage.

Semantic Consistency: Harder Than It Looks

Inconsistent data definitions create problems that multiply when AI systems get involved. Traditional analytics might produce slightly different revenue numbers depending on which data source someone uses, but AI systems can generate completely contradictory strategic recommendations based on the same semantic inconsistencies.

Semantic layers provide the foundational consistency for agentic AI deployment across multiple use cases and business domains, but implementing them requires more organizational coordination than technical sophistication. Getting different departments to agree on standard definitions for concepts like “customer,” “revenue,” or “active user” involves politics as much as technology.

Organizations that succeed in creating reliable AI deployment typically invest heavily in the tedious work of data standardization and semantic modeling before launching exciting AI pilot projects. The payoff comes when AI systems produce consistent, trustworthy results that business users can rely on for important decisions.

Practical Implementation Reality

Companies leveraging semantic layer technologies can accelerate their AI initiatives significantly, but not always in the ways their initial plans anticipated. The most valuable outcomes often emerge from improved data consistency and business-friendly representations that both technical teams and business users can trust and understand.

The acceleration happens when AI systems can access reliable data definitions without requiring extensive custom integration work for each new use case. This reliability becomes especially important as organizations move beyond pilot projects to production AI systems that need to operate consistently across different business domains.

Common Challenges and How to Overcome Them

Data strategy implementations encounter predictable obstacles that seem obvious in retrospect but somehow catch most organizations unprepared. Understanding these patterns can help teams prepare for challenges before they become project-threatening problems.

Data Silos: The Persistent Reality

Departmental autonomy and legacy system architectures create data silos that resist technical solutions. Teams can build elegant integration platforms, but if sales and marketing departments prefer their existing tools and processes, the integration often goes unused.

Recent MIT research reveals that 95% of organizations are getting zero return from their GenAI investments, with most failures stemming from static tools that can’t adapt to actual workflows rather than technical limitations. This finding applies to data integration challenges broadly — success depends as much on organizational adoption as technical capability.

Breaking down silos requires addressing both technical barriers and human incentives. The most effective approaches typically involve demonstrating clear value to individual departments before requiring them to change their established processes.

Executive Sponsorship: Essential but Fragile

Well-designed data strategies fail regularly due to insufficient executive support. However, maintaining that support requires delivering measurable results on timelines that often conflict with data project realities. Executives want to see ROI within quarters, while data infrastructure investments typically pay off over years.

The organizations that sustain executive sponsorship focus on demonstrating ROI through pilot projects that deliver immediate business value, connecting data initiatives to specific business outcomes that executives care about, and maintaining regular communication about measurable value delivered rather than technical progress achieved.

This approach requires project planning that balances long-term infrastructure needs with short-term wins that keep executive attention and funding.

Data Quality: The Never-Ending Story

Poor quality and dark data issues stem from inadequate governance and monitoring, but solving these problems requires ongoing operational investment that never shows up cleanly in project budgets. Organizations often treat data quality as a one-time cleanup effort rather than an ongoing operational requirement.

Sustainable approaches involve automated data quality monitoring systems, clear data ownership responsibilities distributed across business units, and incentive structures that reward data stewardship behavior rather than just punishing data quality problems after they occur.
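
As a rough illustration of what automated monitoring can mean in practice, the sketch below runs three common checks on a batch of records: missing required fields, duplicate keys, and freshness. The function name, field names, and thresholds are assumptions made for the example, not a reference to any specific tool.

```python
from datetime import datetime, timedelta


def quality_report(rows, key, required, timestamp_field, now, max_age):
    """Compute simple data quality indicators for a batch of dict records."""
    total = len(rows)
    if total == 0:
        return {"row_count": 0, "null_rate": {}, "duplicate_keys": 0, "stale": True}

    # Treat None and empty strings as missing values.
    null_rate = {
        field: sum(1 for r in rows if r.get(field) in (None, "")) / total
        for field in required
    }
    keys = [r.get(key) for r in rows]
    duplicate_keys = len(keys) - len(set(keys))
    newest = max(r[timestamp_field] for r in rows)

    return {
        "row_count": total,
        "null_rate": null_rate,
        "duplicate_keys": duplicate_keys,
        "stale": (now - newest) > max_age,
    }


# Invented sample batch.
rows = [
    {"customer_id": 1, "email": "a@example.com", "updated_at": datetime(2024, 1, 10)},
    {"customer_id": 2, "email": None, "updated_at": datetime(2024, 1, 11)},
    {"customer_id": 2, "email": "c@example.com", "updated_at": datetime(2024, 1, 12)},
    {"customer_id": 4, "email": "", "updated_at": datetime(2024, 1, 12)},
]

report = quality_report(
    rows,
    key="customer_id",
    required=["email"],
    timestamp_field="updated_at",
    now=datetime(2024, 1, 15),
    max_age=timedelta(days=2),
)
print(report)
```

Checks like these are cheap to run on every load. The harder part, as noted above, is assigning an owner who is expected to act when the numbers drift.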

Skills and Change Management

Skills gaps and resistance to change require comprehensive approaches that extend beyond traditional training programs. To change organizational cultures, companies need to consistently sustain data literacy initiatives, clear communication about benefits, and recognition programs that reward data-driven decision-making.

The most successful change management approaches focus on making data tools genuinely useful for existing work rather than requiring individuals to adopt new processes for the sake of the data strategy.

Governance Complexity

Organizations can manage governance and compliance requirements through automated policy enforcement, clear escalation procedures, and regular compliance auditing, all integrated into daily workflows. But these systems need ongoing maintenance and adjustment as regulations and business requirements evolve.
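
One simple form of automated policy enforcement is a column-level rule applied before data reaches the user. The sketch below is a toy version under stated assumptions: the `POLICIES` table, the role names, and the choice to deny unlisted roles on sensitive columns are all hypothetical.

```python
# Hypothetical column-level policies: action per role.
# Roles not listed for a governed column are denied by default.
POLICIES = {
    "email": {"analyst": "mask", "steward": "allow"},
    "ssn": {"steward": "mask"},
}


def apply_policies(row: dict, role: str) -> dict:
    """Return the view of a record that the given role is allowed to see."""
    visible = {}
    for column, value in row.items():
        if column not in POLICIES:
            # Ungoverned columns pass through unchanged.
            visible[column] = value
            continue
        action = POLICIES[column].get(role, "deny")
        if action == "allow":
            visible[column] = value
        elif action == "mask":
            visible[column] = "***"
        # "deny" drops the column entirely.
    return visible


record = {"customer_id": 7, "email": "a@example.com", "ssn": "123-45-6789"}

print(apply_policies(record, "analyst"))
print(apply_policies(record, "steward"))
print(apply_policies(record, "intern"))
```

Keeping the rules in data rather than scattered through application code is what makes them auditable and adjustable as regulations change.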

Organizations that handle this well treat governance as an operational capability requiring dedicated resources, not a “one and done” set of policies.

Future Trends in Enterprise Data Strategy

The next few years will bring major shifts to data strategy. However, given the pace of technological change, nobody can pin down exactly when or how these changes will hit.

AI Democratization: Promise and Peril

Natural language interfaces, automated machine learning, and self-service AI platforms promise to extend advanced analytics capabilities to non-technical users. The reality will likely involve more complexity than the vendor presentations suggest.

These tools require robust governance frameworks to prevent non-technical users from drawing incorrect conclusions from incomplete data or generating AI outputs that violate privacy regulations. Most organizations will need to invest significantly in user education and system guardrails to make AI democratization work safely.

Data as Product: Beyond Internal Use

Treating data as strategic assets with defined service level agreements, pricing models, and external market opportunities represents a fundamental shift in how organizations think about information value. Some companies will discover new revenue streams through data monetization, while others will find that their data isn’t as valuable externally as they assumed.

The organizations that succeed in data productization typically start by improving internal data operations before exploring external opportunities.

Autonomous Systems: The Next Frontier

Agentic AI systems that make complex decisions with minimal human intervention will require unprecedented levels of data quality, consistency, and explainability. Most current data infrastructures aren’t ready for these requirements.

Organizations preparing for autonomous systems need to invest in data lineage tracking, decision audit capabilities, and explainable AI frameworks well before deploying autonomous decision-making systems in production environments.

Real-time Everything

Dynamic policy enforcement, automated compliance monitoring, and adaptive security measures that respond to changing risk profiles without hindering business agility represent the next evolution in data governance. These capabilities will require new architectural approaches and operational processes.

Semantic-driven Automation

Artificial intelligence systems that automatically classify, catalog, and govern data based on business meaning rather than technical structure could dramatically reduce manual effort while improving accuracy. However, these systems will need to understand organizational context and business rules that vary significantly across industries and companies.

The organizations that benefit most from these trends will likely be those that have invested in solid data fundamentals before trying to implement the latest innovations.

Enterprise Data Strategy at a Glance

- Enterprise data strategy serves as a comprehensive roadmap aligning data, people, technology, and governance to drive business outcomes and AI readiness

- Core pillars include governance & compliance, architecture & integration, data quality & trust, AI/analytics enablement, culture & change management, and continuous improvement

- AI readiness requires clean, governed data; unified semantic layers; and scalable architectures supporting generative AI, predictive analytics, and autonomous systems

- Implementation success follows a systematic approach: define outcomes, assess current state, establish governance, build architecture, pilot projects, scale operations, and iterate continuously

- Modern architectures leverage semantic layers, data mesh patterns, and federated governance to balance centralized control with distributed ownership and innovation

Transform Your Enterprise Data Strategy with AtScale

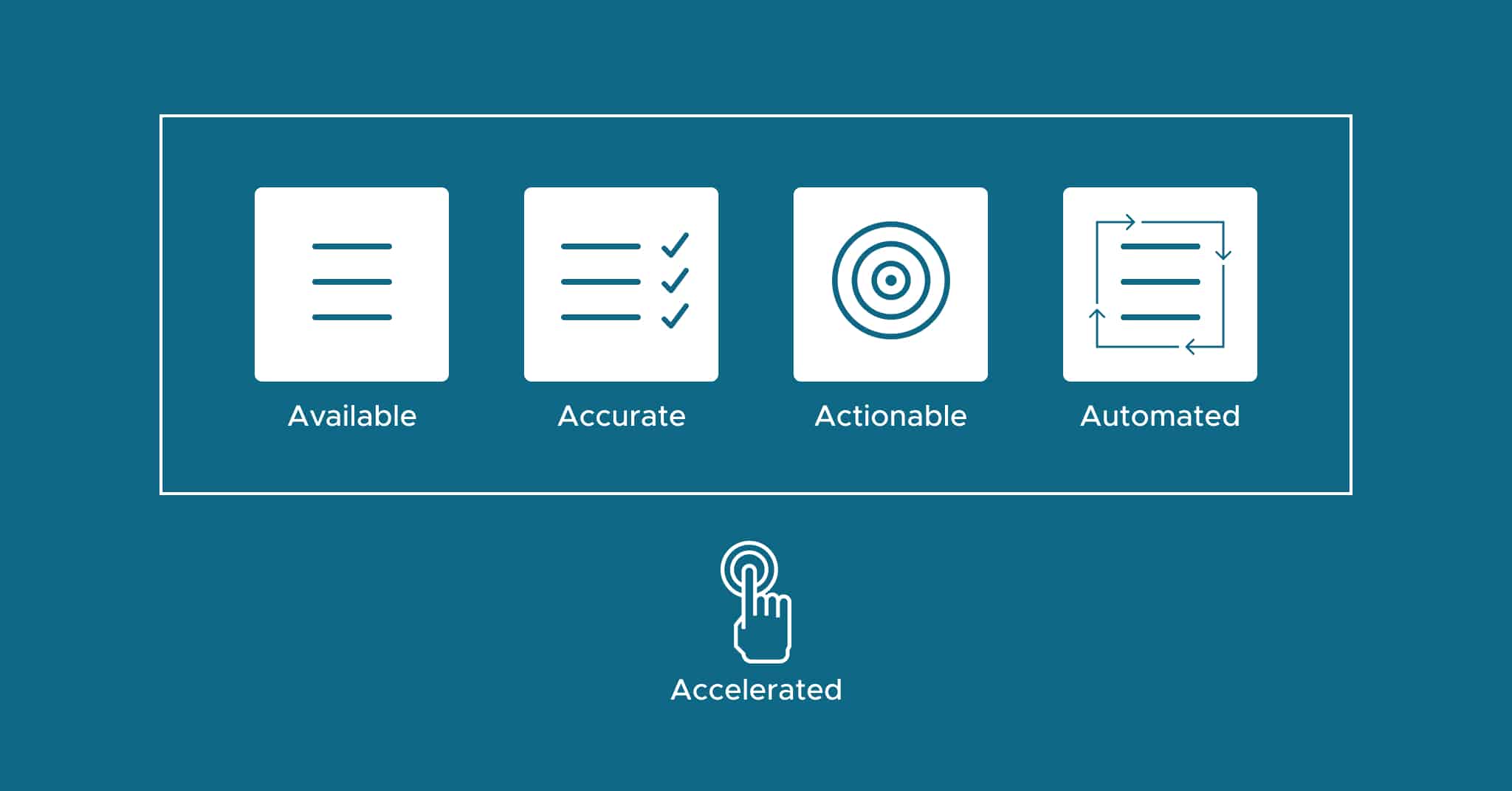

As organizations grapple with increasing data complexity and AI implementation challenges, the gap between data potential and business outcomes continues to widen. Analytics leaders need solutions that can deliver the five A’s of data — available, accurate, actionable, automated, and accelerated — without requiring extensive technical resources or lengthy implementation cycles.

The AtScale semantic layer platform addresses these challenges by providing a unified data foundation that connects cloud data platforms directly to business intelligence tools like Tableau, Power BI, and Excel. Data engineers can establish governed, reusable metrics once, while business users gain self-service access to consistent, high-performance analytics across the enterprise. This approach eliminates the traditional bottlenecks of manual data preparation, reduces cloud compute costs through intelligent query optimization, and ensures that organizations build AI initiatives on trusted, semantically consistent data.

Ready to accelerate your enterprise data strategy? Request a demo to see how AtScale can transform your organization’s path from data to insights.

Frequently Asked Questions

What is an enterprise data strategy?

An enterprise data strategy is a comprehensive plan that defines how an organization manages, governs, and uses its data to achieve business objectives and enable AI-driven insights. It serves as a roadmap connecting data assets to measurable business outcomes while ensuring governance and scalability.

Why does an enterprise data strategy matter?

With 80% of data strategies expected to fail without strong governance, a comprehensive strategy is essential for competing effectively, meeting compliance obligations, and enabling AI innovation while maintaining data trust and operational efficiency.

What are the core pillars of an enterprise data strategy?

The six core pillars are: data governance and compliance, architecture and integration (including semantic layers), data quality and trust, AI/analytics enablement, culture and change management, and measurement and continuous improvement frameworks.

How do you assess data maturity?

Data maturity assessment involves evaluating data architecture readiness for AI, auditing system integrations and silos, measuring current analytics capabilities, identifying dark data (approximately 90% of enterprise data goes unanalyzed), and assessing cultural readiness and leadership alignment.

What are the steps to building an enterprise data strategy?

1) Define business outcomes

2) Inventory and assess existing data assets

3) Establish governance and compliance policies

4) Build target-state architecture

5) Launch pilot projects

6) Operationalize success at scale

7) Measure and iterate continuously

How does a data strategy support AI?

A robust data strategy supports AI by creating three essential foundations:

1) Clean, trustworthy data for ML models

2) Unified semantic layers that provide consistent definitions across all systems so different AI tools produce aligned results

3) Scalable infrastructure that handles everything from chatbots to predictive analytics without breaking
