Turning raw data into analysis-ready data sets for Business Intelligence (BI) and analytics teams is a challenge for many organizations. While collecting and storing information is easier than ever, delivering data sets that are fully prepped for analysts and decision makers to interact with can be difficult. The complexity is not in the transformation of data – it is in establishing an organizing principle that is universally understood by all data consumers. With so much information floating around organizations in various silos, it can be difficult to determine what the data really is, let alone what it means.

The purpose of a semantic layer for data and analytics is to let organizations translate raw data into a single source of truth that includes common enterprise metrics (like revenue, cost, and quantities) as well as standardized dimensions to sort, group, and categorize around clear, defined concepts (like time periods, geographic locations, and products). Organizing metrics around dimensions in this way is known as dimensional modeling, which was popularized over 25 years ago in Ralph Kimball’s seminal book The Data Warehouse Toolkit.

While the world of enterprise data has been transformed by cloud data platforms, modern web-based BI, and data science platforms, the basic concept of dimensional modeling remains highly relevant and offers a powerful paradigm for delivering analysis-ready data to data consumers across the enterprise. In this post, we explore how organizations can make their raw data analysis-ready with dimensional modeling to accelerate time to insight and support data-driven decision making.

The Raw Data Problem

Organizations have no shortage of raw data; in fact, they’re inundated with it.

Unfortunately, this data is often trapped within silos: physical silos of different cloud platforms, schema silos of different data formats and structures, and semantic silos of different definitions and descriptions of data. Raw data trapped within silos is devoid of meaningful context. Without an approach to integrating data and making it analysis-ready, different people, using different tools, could all draw different conclusions from the same data. Or, even more likely, many data consumers won’t even know where to start.

For example, if a business operations team is making investment decisions using Salesforce.com data alone, they may lack the context of lead source data available in Google Analytics. Not only are the raw data sets separated into different SaaS application clouds, they also have different data schemas and different geographical segmentations. Even if the raw data is extracted and loaded to a common cloud data lake or cloud data warehouse, the schema and semantic silos remain. The AtScale semantic layer platform delivers a flexible approach to making this data analysis-ready, within a well-defined, business-oriented dimensional model that different analysts and decision makers can understand.

This simple example can be extrapolated to the more complex data reality of the modern enterprise.
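To make the schema and semantic silos concrete, here is a minimal Python sketch of mapping two sources onto one shared region dimension. Everything in it is an assumption made for illustration: the record layouts, the field names (`billing_state`, `geo_region`, `opportunities`, `sessions`), and the region mappings are hypothetical and are not taken from Salesforce, Google Analytics, or AtScale.

```python
from collections import defaultdict

# Hypothetical CRM extract: geography recorded as a US state code.
crm_rows = [
    {"billing_state": "CA", "opportunities": 12},
    {"billing_state": "WA", "opportunities": 5},
    {"billing_state": "NY", "opportunities": 9},
]

# Hypothetical web analytics extract: geography recorded as a region label.
web_rows = [
    {"geo_region": "US-West", "sessions": 4000},
    {"geo_region": "US-East", "sessions": 2500},
]

# Shared region dimension: each source-specific value maps to one agreed name.
# These mappings are illustrative assumptions, not a real standard.
STATE_TO_REGION = {"CA": "West", "WA": "West", "NY": "East"}
WEB_TO_REGION = {"US-West": "West", "US-East": "East"}


def conform(crm, web):
    """Combine both sources into one row of metrics per shared region."""
    combined = defaultdict(lambda: {"opportunities": 0, "sessions": 0})
    for row in crm:
        region = STATE_TO_REGION[row["billing_state"]]
        combined[region]["opportunities"] += row["opportunities"]
    for row in web:
        region = WEB_TO_REGION[row["geo_region"]]
        combined[region]["sessions"] += row["sessions"]
    return dict(combined)


by_region = conform(crm_rows, web_rows)
```

Once both sources agree on what "region" means, opportunities and sessions can sit side by side in the same row, which is the context the operations team was missing.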

The Power of Defining a Dimensional Model Within a Semantic Layer

A semantic layer is the natural place to apply dimensional modeling in order to expose analysis-ready data sets to data consumers. Dimensional modeling forms a set of “guard rails” for self-service BI. Dimensional models standardize critical business concepts like “time”, “location”, or “SKU” so that filtering, grouping, and categorization work in a way that is intuitive for data consumers.

Business users tend to think of data in dimensional terms, so this construct makes raw data easier to understand and use. Delivered within a semantic layer, dimensions make it easier for data consumers to parse through reams of data quickly to uncover previously hidden patterns and relationships. A semantic layer empowered with a dimensional model delivers a single view of multiple data sources and organizes data in an intuitive way to reveal insights from new angles.

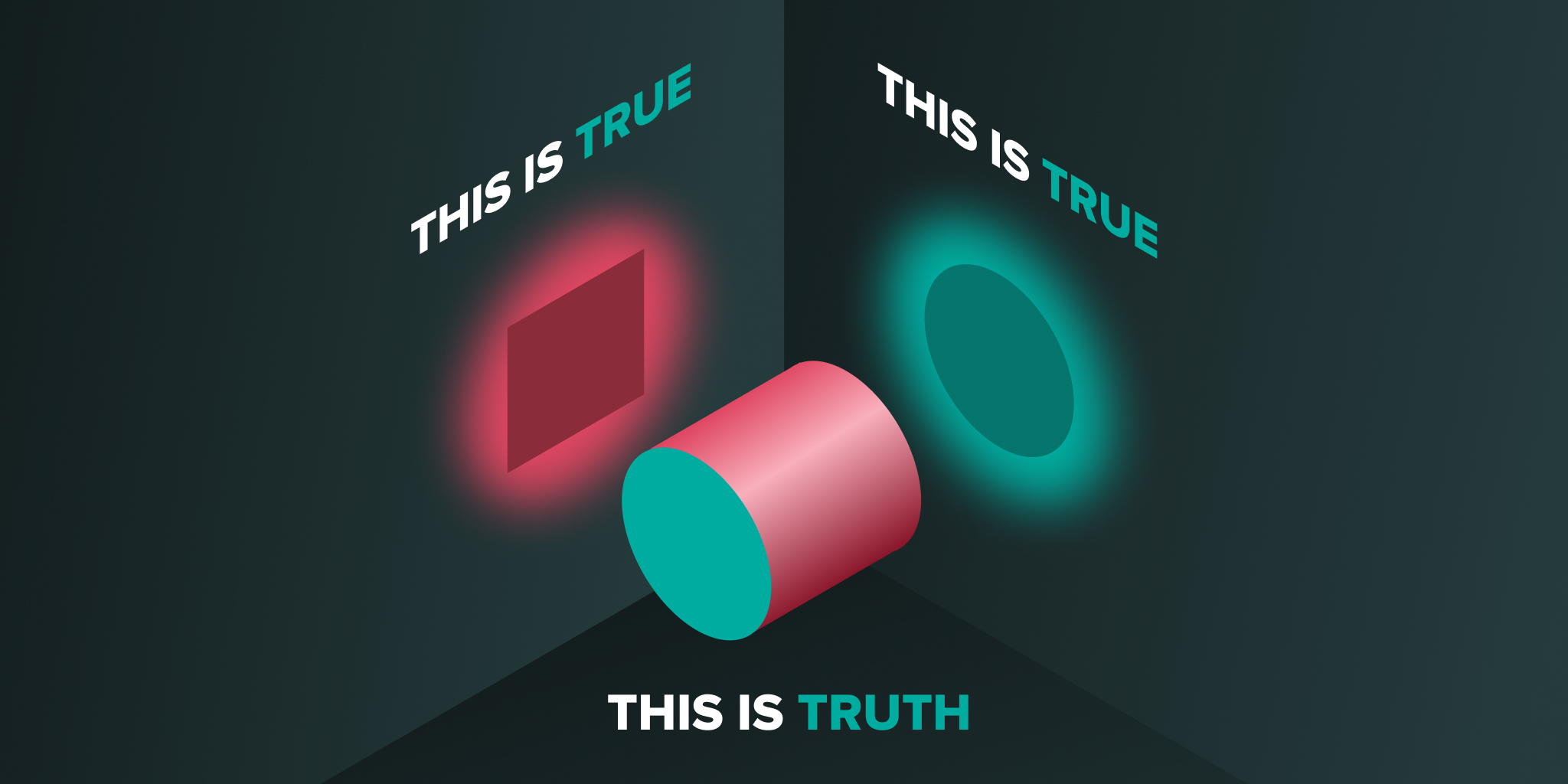

Without a dimensional view of your data, your organization runs the risk of seeing only one side of a multifaceted problem – drawing incomplete or incorrect conclusions and spending time reconciling conflicting views of the truth. No matter how you look at it, this inefficiency makes it more difficult for decision makers to be truly data-driven.

How Dimensional Modeling is Fundamental to Self-Service BI

Dimensional models delivered through a semantic layer turn raw data into analysis-ready data sets. Data consumers are able to slice and dice data using business concepts like time, region, product, and price. And, with intuitive data analysis tools, they can do so without needing the technical expertise to map or join raw data. With dimensional hierarchies, data consumers can further categorize and summarize data. For example, a product dimension with a hierarchy allows users to roll up product SKUs (the most granular view of product) into product families, product categories, and other groupings, which makes finding trends easier and faster than querying data at its most granular level (SKU).
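The roll-up described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the SKUs, families, categories, and revenue figures are invented, and `PRODUCT_DIM` and `roll_up` are hypothetical names rather than part of any semantic layer product.

```python
from collections import defaultdict

# Hypothetical product dimension: each SKU belongs to a family and a category.
PRODUCT_DIM = {
    "SLED-001": {"family": "Plastic Sleds", "category": "Sleds"},
    "SLED-002": {"family": "Wooden Sleds", "category": "Sleds"},
    "SKI-001": {"family": "Downhill Skis", "category": "Skis"},
}

# Hypothetical fact rows at the most granular level (one row per SKU sale).
sales = [
    {"sku": "SLED-001", "revenue": 120.0},
    {"sku": "SLED-002", "revenue": 80.0},
    {"sku": "SLED-001", "revenue": 60.0},
    {"sku": "SKI-001", "revenue": 400.0},
]


def roll_up(rows, level):
    """Sum revenue at a hierarchy level: "sku", "family", or "category"."""
    totals = defaultdict(float)
    for row in rows:
        # Look up the SKU's ancestor at the requested level of the hierarchy.
        key = row["sku"] if level == "sku" else PRODUCT_DIM[row["sku"]][level]
        totals[key] += row["revenue"]
    return dict(totals)


by_family = roll_up(sales, "family")
by_category = roll_up(sales, "category")
```

The same fact rows answer questions at every level of the hierarchy; the user only chooses the level, and the dimension supplies the mapping.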

Even better, by combining multiple dimensions (or hierarchies) in a single query, users can quickly uncover hidden trends. For example, a sporting goods retailer may analyze product, time, and region dimensions together and find that a particular product category (e.g., sleds) underperformed in a particular region (e.g., the West Coast) due to a spell of unfavorable weather (e.g., no snow) over a few weeks. Without the ability to break down data into dimensions, this localized (time and region) trend would have been hard, if not impossible, to uncover. Further, an analyst's ability to perform sophisticated analysis without the support of data engineers advances the vision of self-service BI.
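A small Python sketch shows why the combination of dimensions matters in the sled scenario. The numbers are made up, the `group_by` and `underperformers` helpers are hypothetical, and the "below half of the same week's regional average" rule is an arbitrary assumption chosen only to make the pattern visible.

```python
from collections import defaultdict

# Hypothetical weekly unit sales, tagged with product, region, and time dimensions.
sales = [
    {"category": "Sleds", "region": "West Coast", "week": "W01", "units": 50},
    {"category": "Sleds", "region": "West Coast", "week": "W02", "units": 8},
    {"category": "Sleds", "region": "West Coast", "week": "W03", "units": 6},
    {"category": "Sleds", "region": "Northeast", "week": "W01", "units": 55},
    {"category": "Sleds", "region": "Northeast", "week": "W02", "units": 60},
    {"category": "Sleds", "region": "Northeast", "week": "W03", "units": 58},
]


def group_by(rows, dims):
    """Sum units for every combination of the requested dimensions."""
    totals = defaultdict(int)
    for row in rows:
        totals[tuple(row[d] for d in dims)] += row["units"]
    return dict(totals)


def underperformers(cells, threshold=0.5):
    """Flag (category, region, week) cells far below that week's regional average."""
    peers = defaultdict(list)
    for (category, _region, week), units in cells.items():
        peers[(category, week)].append(units)
    flagged = []
    for (category, region, week), units in cells.items():
        values = peers[(category, week)]
        average = sum(values) / len(values)
        if units < threshold * average:  # assumed cutoff, for illustration only
            flagged.append((category, region, week))
    return sorted(flagged)


by_category = group_by(sales, ["category"])  # one total hides the dip
by_all = group_by(sales, ["category", "region", "week"])
flagged = underperformers(by_all)
```

Grouped by category alone, sleds show a single total and nothing looks unusual. Grouped by category, region, and week together, the two weak West Coast weeks stand out, and an analyst can then look for a cause such as the weather.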

There are other benefits to dimensional modeling within a semantic layer like AtScale, including performance optimization and simplification of data pipelines. These will be subjects for future posts.

To learn more about how to get the most from augmented analytics, including how to apply dimensionality to turn raw data into BI, you can watch our free webinar here.

