Patient documentation has come a long way since the days of doctors writing patient notes with pen and paper. Today, Electronic Medical Record (EMR) systems can capture, exchange, and store medical records automatically. These advances have been significant for providers and patients alike, and ready access to information can meaningfully improve patient outcomes.

EMR technology is still relatively young, however, and it has some critical shortfalls. Addressing them could significantly improve both patient care and medical research at a time when both have never been more important.

The Narrative Problem

If there is one thing limiting current EMR systems, it’s the human capacity to analyze the sheer number of medical records that might be required to make the best treatment decisions for a patient. The root of this challenge is that much of the data in a medical record is narrative, or unstructured, text. For most EMR systems (and the doctors and nurses using them), this means manually reading the text entered by providers to inform treatment decisions. For complex cases, dozens, hundreds, or even thousands of records might be relevant to a patient’s situation.

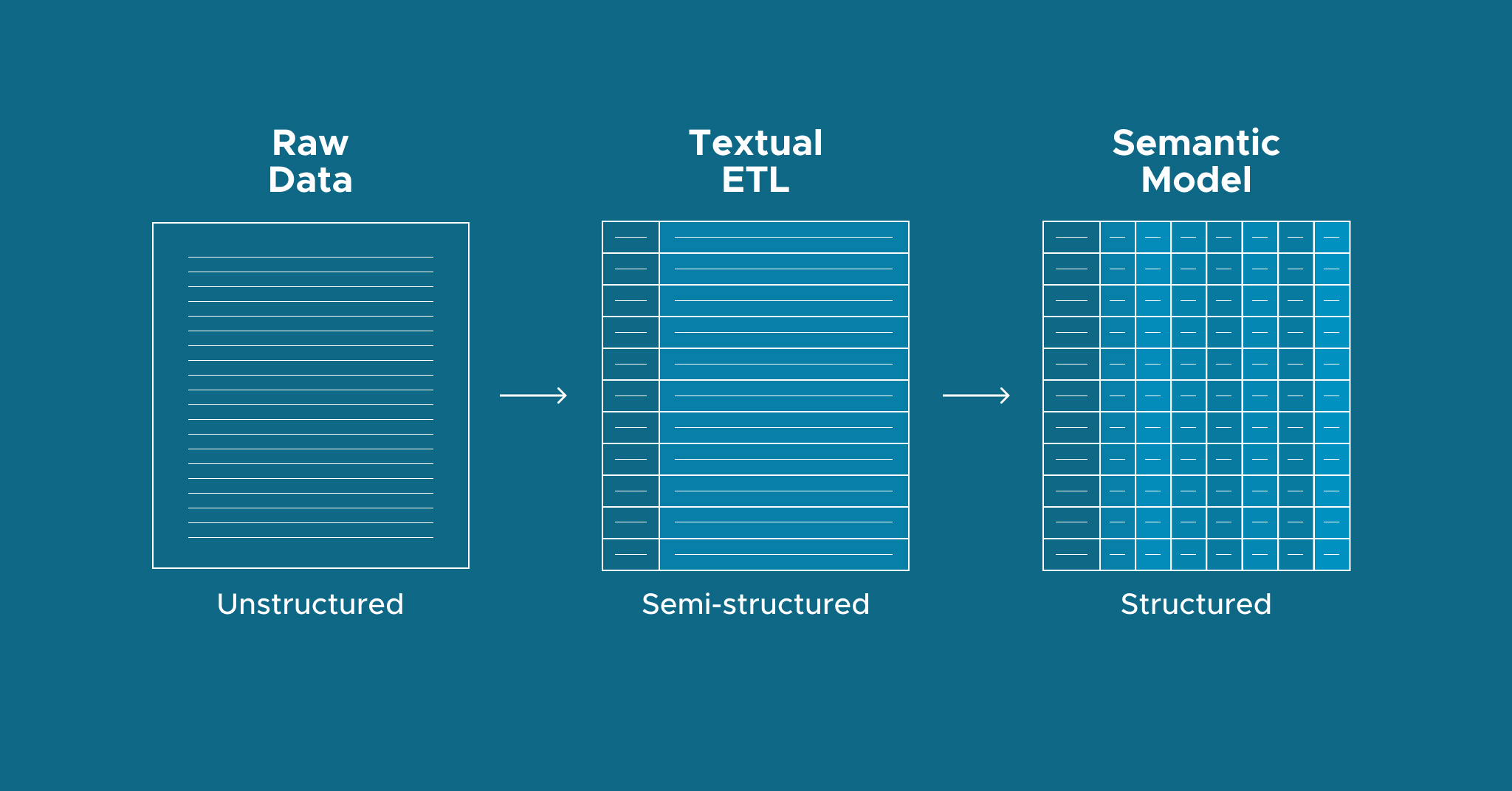

When it comes to medical research, the relevant records containing narrative text could number in the millions or billions. Most industries solve the “preponderance of data” problem by leveraging data science and analytics. But what about sectors that capture a lot of narrative data in their records, like our EMR use case? Solving this problem requires structuring the narrative text in patient records in a way that computer programs and data tools can consume. So how do we get from narrative text to a structured format? That’s where Textual ETL comes in.

Textual ETL

Textual ETL is a new technology, pioneered by Bill Inmon and presented in his book, The Textual Warehouse, that moves text from a narrative format to a database format. This is a game-changer for the EMR use case and many others. Instead of manually analyzing dozens of records to inform decisions, data science applications can analyze millions of documents at once.

Textual ETL is particularly powerful for medical research applications because traditional EMR systems are so limited in that capacity. The pervasive use of narrative text in virtually every record makes automated analytics all but impossible. Textual ETL solves this problem by iterating through the narrative, identifying what’s essential, and creating an organized, relational, computer-friendly version of the notes called Disambiguated Text. Once this step completes, the data can be analyzed, starting with the semantic layer.
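To make the idea of moving from narrative to rows concrete, here is a deliberately tiny sketch in Python. It is not Bill Inmon's Textual ETL and does not reflect how that engine works internally; it simply looks up terms from a small, hypothetical taxonomy (`TAXONOMY`) and emits one relational-style row per match. The `Row` fields and the `extract_rows` function are assumptions made for illustration.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical mini-taxonomy mapping a term to a category. A real system
# would rely on far richer medical vocabularies; these entries are
# illustrative only.
TAXONOMY = {
    "hypertension": "diagnosis",
    "chest pain": "symptom",
    "lisinopril": "medication",
}


@dataclass(frozen=True)
class Row:
    note_id: str
    offset: int    # character position of the term within the note
    term: str
    category: str


def extract_rows(note_id: str, text: str) -> List[Row]:
    """Scan one narrative note and emit one row per taxonomy term found."""
    rows = []
    lowered = text.lower()
    for term, category in TAXONOMY.items():
        pattern = r"\b" + re.escape(term) + r"\b"
        for match in re.finditer(pattern, lowered):
            rows.append(Row(note_id, match.start(), term, category))
    # Return rows in the order the terms appear in the note.
    return sorted(rows, key=lambda row: row.offset)


note = "Patient reports chest pain. History of hypertension; continue lisinopril."
for row in extract_rows("note-001", note):
    print(row)
```

Simple keyword matching like this ignores negation ("no chest pain"), abbreviations, and context, which is exactly the kind of ambiguity a dedicated text-processing engine has to resolve.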

What is a Semantic Layer?

A semantic layer presents a consistent set of business metrics that business intelligence (BI) and data science teams can consume from within the tools of their choice. It also establishes an integration layer that enables analytics discoverability, data governance, and robust security measures. Finally, the semantic layer delivers massive performance benefits, accelerating end-to-end query performance and optimizing resource usage in cloud implementations.
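The "consistent set of metrics" idea can be sketched in a few lines of Python. This is a toy model, not AtScale's product or API: the `ROWS` data, the `METRICS` registry, and the `query` function are all hypothetical names chosen for illustration. The point is only that each metric is defined once, by name, so every consumer that asks for it gets the same calculation.

```python
# Hypothetical structured rows, for example the output of a text-extraction step.
ROWS = [
    {"note_id": "n1", "category": "diagnosis", "term": "hypertension"},
    {"note_id": "n1", "category": "medication", "term": "lisinopril"},
    {"note_id": "n2", "category": "diagnosis", "term": "hypertension"},
    {"note_id": "n3", "category": "diagnosis", "term": "asthma"},
]

# Each metric is defined exactly once, in one place.
METRICS = {
    # Number of distinct notes in a group of rows.
    "note_count": lambda rows: len({row["note_id"] for row in rows}),
    # Number of extracted mentions in a group of rows.
    "mention_count": lambda rows: len(rows),
}


def query(metric: str, dimension: str, rows=ROWS) -> dict:
    """Evaluate a named metric grouped by a named dimension."""
    groups = {}
    for row in rows:
        groups.setdefault(row[dimension], []).append(row)
    return {key: METRICS[metric](group) for key, group in groups.items()}


# A dashboard and a notebook would both call the same definition.
print(query("note_count", "term"))
print(query("mention_count", "category"))
```

Because consumers refer to metrics and dimensions by name rather than re-implementing the logic, a change to a definition in `METRICS` propagates to every caller at once.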

Analyzing Doctor Notes with ETL + Semantic Layer

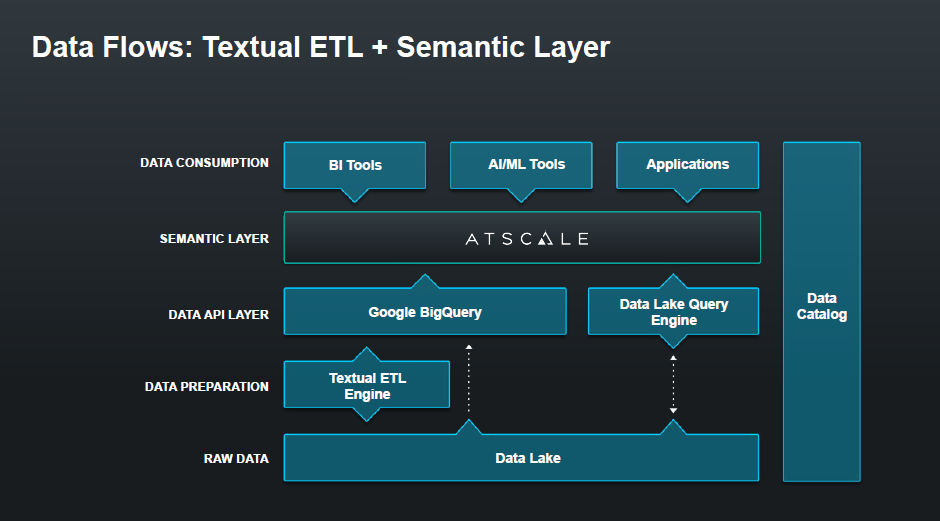

For our EMR use case, the data flow starts with raw data held in simple files in a data lake, which passes through the Textual ETL Engine.

Data is then encoded into the semantic layer and stored in a Google BigQuery data warehouse. From there, data can be consumed by virtually any BI tool, AI/ML tool, or custom application.

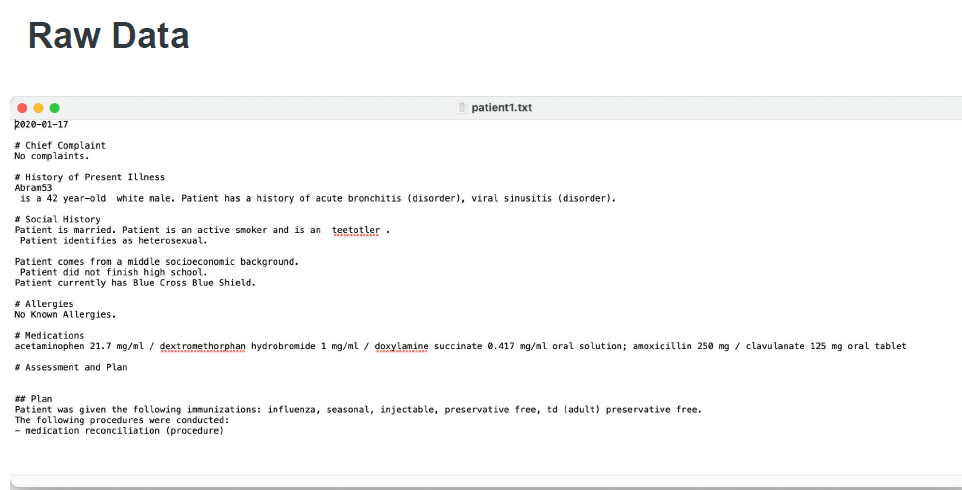

Here is a look at the raw narrative text before the Textual ETL Engine processes it:

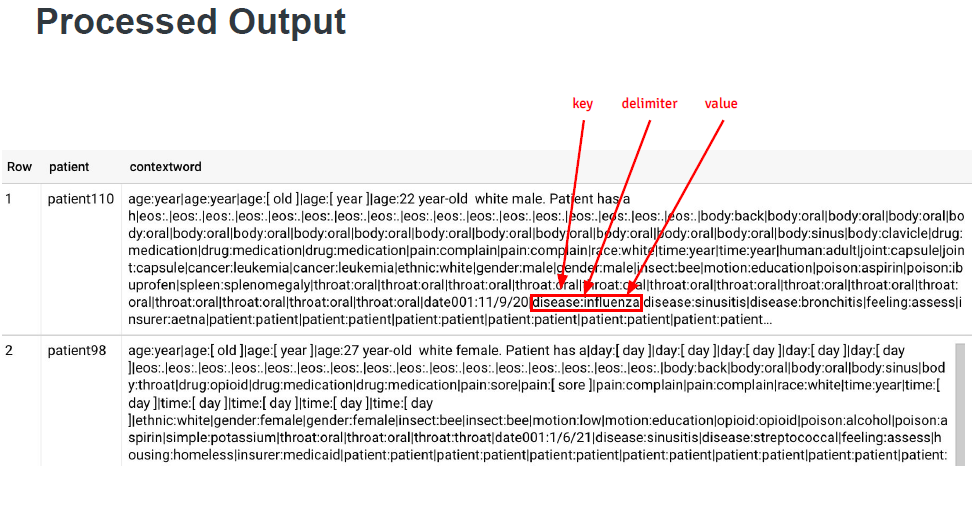

This is the processed output:

As you can see, the data is semi-structured and, while more organized than free text, is still challenging to consume for data science and analytical purposes. That’s where the semantic layer comes into play.

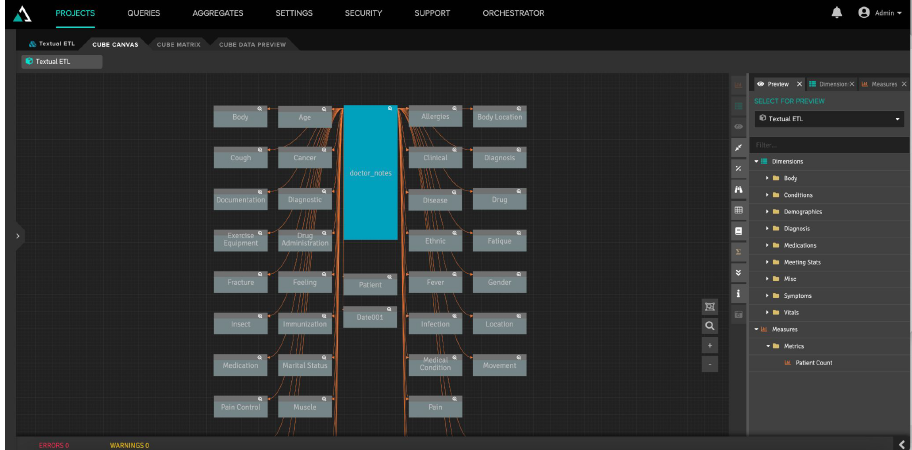

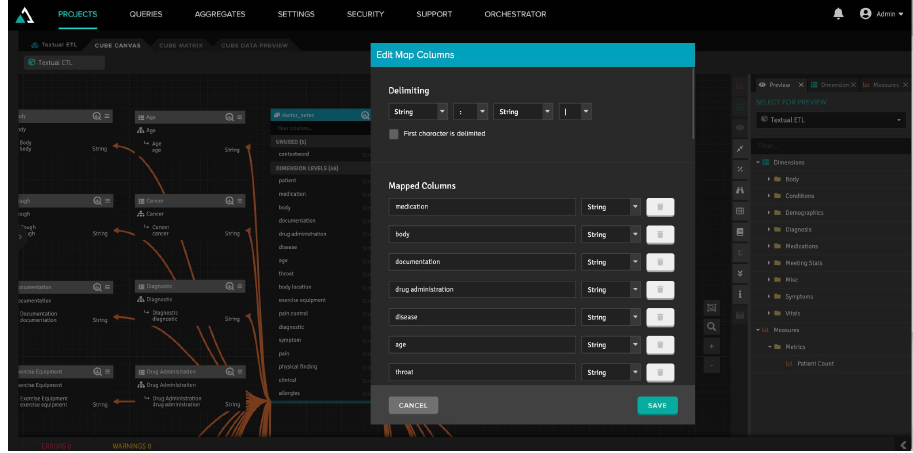

Here is a view of the Semantic Model for a doctor’s note. The grey boxes represent the categories derived from the Textual ETL analysis of the narrative text.

Part of what makes this so powerful is that the tool created both the data model and the data dimensions based on the delimiting structure of the Textual ETL output. Not only does this save the time and painstaking effort required to create a data model from scratch, it also starts from nothing more than a simple blob of free narrative text and can adapt to new data automatically.
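As a rough illustration of deriving dimensions from delimited output, the Python sketch below treats every column of a delimited file as a candidate dimension and collects its distinct values. The pipe delimiter, the column names in `SAMPLE`, and the `infer_dimensions` function are assumptions; this article does not document the actual output layout or how the tool builds its model.

```python
import csv
import io

# Hypothetical pipe-delimited output. The real column layout produced by
# the Textual ETL Engine is not specified here.
SAMPLE = """note_id|category|term
n1|diagnosis|hypertension
n1|medication|lisinopril
n2|diagnosis|asthma
"""


def infer_dimensions(delimited_text: str, delimiter: str = "|") -> dict:
    """Treat each column as a candidate dimension and list its distinct values."""
    reader = csv.DictReader(io.StringIO(delimited_text), delimiter=delimiter)
    dimensions = {name: set() for name in reader.fieldnames}
    for record in reader:
        for name, value in record.items():
            dimensions[name].add(value)
    # Sort the values so the result is deterministic.
    return {name: sorted(values) for name, values in dimensions.items()}


for name, values in infer_dimensions(SAMPLE).items():
    print(name, values)
```

Re-running the function on a file with an additional column would surface that column as a new dimension without any code change, which is the spirit of a model that adapts to new data.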

Watch the full demonstration featuring a presentation from Bill Inmon, the Father of the Data Warehouse, here.

Use AtScale to Transform Unstructured Data into Actionable Insights

One of the biggest challenges in analytics is leveraging the data generated by business processes “as-is” and transforming it into actionable and insightful information to support decisions. The combination of Textual ETL and AtScale’s Semantic Layer does just that. And it isn’t limited to the EMR use case. Many industries can benefit from transforming unstructured text into structured data consumable by any BI tool. To find out more, request a demo.
