*This post was originally published by the author, Anurag Singh. You can view the original post here.

Making data accessible to everyone within an organization is a challenge that most companies face. Different teams use data for different ends: data scientists generate forecasts and predictions, while business analysts manage and drive revenue.

That creates a need for a unified fabric that joins business and data science teams. It should be user-friendly and business-friendly, built on a consistent set of business metrics, and it should let both parties keep using the tools they already know, so there is no learning curve. It should also give IT a single source of truth and the ability to govern data across the organization.

What Is a Semantic Layer?

A semantic layer is a business representation of corporate data that helps end-users access data autonomously using common business terms. A semantic layer maps complex data into familiar business terms such as product, customer, or revenue to offer a unified and consolidated view of data across the organization.
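To make the mapping idea concrete, here is a minimal sketch in Python, using an in-memory SQLite database as a stand-in for a warehouse. The table `fct_sls_v2`, its columns, and the `SEMANTIC_MODEL` dictionary are all hypothetical names invented for illustration; this is not how AtScale defines or stores its models, only one way to picture business terms resolving to physical columns.

```python
import sqlite3

# Hypothetical semantic model: business terms mapped to physical SQL expressions.
SEMANTIC_MODEL = {
    "table": "fct_sls_v2",
    "dimensions": {"product": "prd_nm", "customer": "cust_nm"},
    "metrics": {"revenue": "SUM(net_amt)"},
}


def compile_query(metrics, dimensions, model=SEMANTIC_MODEL):
    """Translate a request phrased in business terms into SQL on the physical table."""
    dim_cols = [f'{model["dimensions"][d]} AS {d}' for d in dimensions]
    metric_cols = [f'{model["metrics"][m]} AS {m}' for m in metrics]
    sql = f'SELECT {", ".join(dim_cols + metric_cols)} FROM {model["table"]}'
    if dimensions:
        group_by = ", ".join(model["dimensions"][d] for d in dimensions)
        sql += f" GROUP BY {group_by} ORDER BY {group_by}"
    return sql


def demo():
    """Load three sample rows and ask for revenue by product."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE fct_sls_v2 (prd_nm TEXT, cust_nm TEXT, net_amt REAL)")
    conn.executemany(
        "INSERT INTO fct_sls_v2 VALUES (?, ?, ?)",
        [("Widget", "Acme", 100.0), ("Widget", "Globex", 50.0), ("Gadget", "Acme", 25.0)],
    )
    rows = conn.execute(compile_query(["revenue"], ["product"])).fetchall()
    conn.close()
    return rows


print(demo())  # [('Gadget', 25.0), ('Widget', 150.0)]
```

The caller only ever writes `revenue` and `product`; the cryptic physical names stay inside the model definition, which is the single place a change would need to be made.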

AtScale is a semantic layer platform that maps complex data into familiar business terms for data science and business intelligence programs built on the cloud.

AtScale Features:

- Presents a consistent set of business metrics for business intelligence and data science teams to consume using the tools of their choice.

- Establishes an integration layer within the enterprise data fabric to support analytics discoverability, governance and security.

- Accelerates end-to-end query performance while optimizing cloud resources.

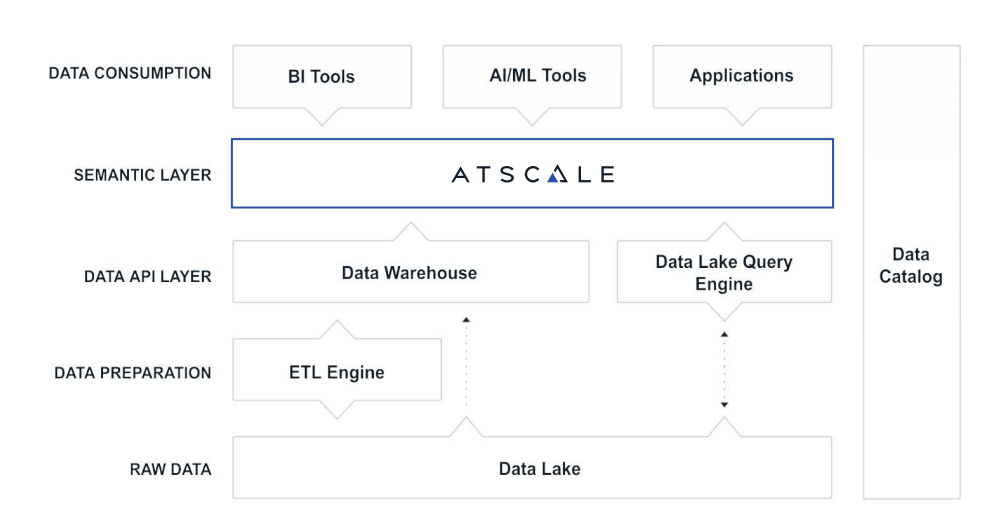

So where does a semantic layer sit in your data analytics stack?

Let’s take a closer look at the architecture and how AtScale achieves this.

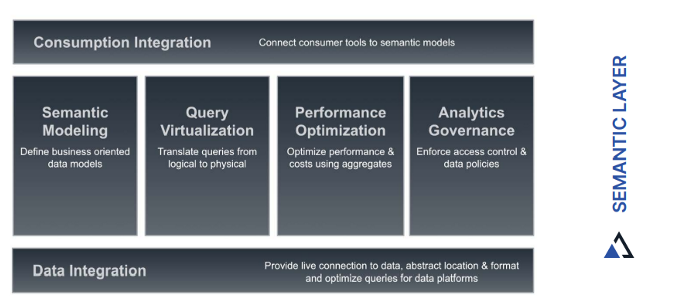

The answer lies in the way the components are designed to function.

- Consumption Integration – Anyone using Excel, Power BI, Looker or Tableau can connect to AtScale and run queries immediately. Applications and integrations can connect via REST, JDBC, ODBC, MDX and DAX.

- Semantic Modeling – AtScale’s semantic model unifies semantic definitions and metrics for data and makes them available in one location for BI, AI/ML and applications. It works with data stored anywhere, whether it’s in a data lake or a data warehouse.

- Query Virtualization – AtScale’s data virtualization automates the sourcing, curation and modeling of data on-premises or in the cloud. It blends live data from multiple data sources into virtual, logical views. Virtualization makes IT more agile with the ability to store data in the most suitable platforms while providing the flexibility to adopt new platforms in the future.

- Performance Optimization – AtScale’s autonomous performance optimization technology identifies query patterns to create and manage intelligent aggregates, just like the data engineering team would do. The AI-driven optimization engine learns from user behavior and data relationships, managing data updates and changes.

- Analytics Governance – AtScale provides enterprise directory integration (AD/Okta/OAuth), role-based access controls, object-level control for users/groups and column masking in query tools.

- Data Integration – AtScale speaks to data lakes and data warehouses with data-platform-optimized SQL so performance is as fast as or faster than hand-written queries. Rather than processing data locally, the AtScale engine pushes queries down to the underlying data platform to eliminate data movement and scale performance along with the data platform, without the need to manage a separate analytics infrastructure.
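The idea behind the Performance Optimization component can be pictured as aggregate-aware routing: if a pre-built summary table already contains every dimension a query needs, the query can be answered from that smaller table instead of the full fact table. The sketch below is a simplified illustration under stated assumptions, not AtScale's actual engine. The `Aggregate` class, the table names, and the row counts are hypothetical, and the approach is only valid for additive metrics such as sums and counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Aggregate:
    """A hypothetical pre-computed summary table."""
    table: str
    dimensions: frozenset
    row_count: int


def choose_table(query_dims, aggregates, fact_table="sales_fact"):
    """Return the smallest aggregate covering the query's dimensions, else the fact table."""
    needed = frozenset(query_dims)
    # An aggregate is usable only if it retains every dimension the query groups by.
    candidates = [a for a in aggregates if needed <= a.dimensions]
    if not candidates:
        return fact_table
    return min(candidates, key=lambda a: a.row_count).table


aggregates = [
    Aggregate("agg_product_month", frozenset({"product", "month"}), 12_000),
    Aggregate("agg_product", frozenset({"product"}), 400),
]

print(choose_table(["product"], aggregates))           # agg_product
print(choose_table(["product", "month"], aggregates))  # agg_product_month
print(choose_table(["customer"], aggregates))          # sales_fact
```

A real optimizer would also decide which aggregates to build from observed query patterns and keep them current as the underlying data changes; this sketch covers only the selection step.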

AtScale Query Engine – The AtScale query engine acts as a query interface for business intelligence, AI/ML tools and applications. Consumption tools can connect to AtScale via one of the following protocols:

- For tools that speak SQL, the AtScale engine appears as a Hive SQL warehouse.

- For tools that speak MDX or DAX, AtScale appears as a SQL Server Analysis Services (SSAS) cube.

- For applications that speak REST or Python, AtScale appears as a web service.
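The three cases above amount to a lookup from the client's protocol to the interface AtScale presents. A minimal sketch of that lookup follows; the `FACADES` table and the `facade_for` helper are hypothetical names used only to restate the list as code, and they are not part of any AtScale API.

```python
# Client protocol -> interface the list above says AtScale presents.
FACADES = {
    "SQL": "Hive SQL warehouse",
    "MDX": "SQL Server Analysis Services (SSAS) cube",
    "DAX": "SQL Server Analysis Services (SSAS) cube",
    "REST": "web service",
    "PYTHON": "web service",
}


def facade_for(protocol):
    """Return how AtScale appears to a client speaking the given protocol."""
    key = protocol.strip().upper()
    if key not in FACADES:
        raise ValueError(f"unsupported protocol: {protocol!r}")
    return FACADES[key]


print(facade_for("dax"))  # SQL Server Analysis Services (SSAS) cube
```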
