Late last year, Gartner published its first Market Guide for Analytics Query Accelerators (available with a Gartner subscription). Gartner loosely defines this broad set of technologies as providing “[query] optimization on top of semantically flexible data stores, typically associated with data lake architectures.” AtScale was included as a representative vendor in the “Virtualization” category. As with our relationship to Data Virtualization technologies, which we discussed in a prior post, AtScale does not consider its semantic layer platform to be a query accelerator. Still, it is worthwhile to explore the fundamentals of modern analytics query acceleration and how we fit in.

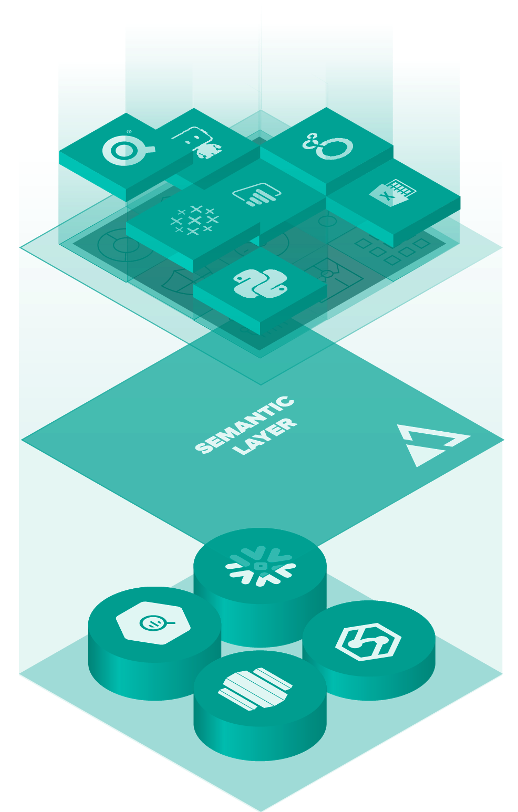

With business intelligence (BI) and data science driving a large portion of new workloads migrating to cloud data platforms (including both cloud data warehouses and cloud data lakes), maintaining performant queries while optimizing cloud costs is top of mind for modern analytics architects. AtScale leverages a unique combination of data virtualization, semantic modeling, and automated data engineering to help analytics teams get the most from their cloud investments. This post explores the basics of analytics query acceleration and how AtScale complements a best-in-class deployment of modern BI solutions (such as Power BI, Excel, Tableau, and Looker) on a cloud data warehouse (such as Snowflake, Google BigQuery, Amazon Redshift, or Microsoft Azure Synapse) or a data lake platform (such as Databricks or Amazon S3).

Performant Queries on Live Cloud Data

Since the dawn of analytics, data engineers have turned to physical transformation and data movement to accelerate queries. Bringing subsets of data into a cache, or even onto local desktops, does accelerate queries against that reduced data set. Conventional OLAP approaches like Microsoft SQL Server Analysis Services (SSAS) transform relational data into “cubes” that store data in a pre-calculated format for lightning-fast query performance. These approaches, which involve “ingesting” data, break the connection to live cloud data, requiring data engineers to manage data updates and data consumers to work through data sync issues. Furthermore, local storage and in-memory resources are expensive and limited in their ability to scale elastically. In practice, ingest-based acceleration strategies do not scale sufficiently to support cloud-scale businesses.

Understanding Materialized Views

Cloud data platform providers have all been introducing their own implementations of an approach known as “Materialized Views.” The concept involves creating and maintaining a new physical, aggregated or abridged version of the data that is logically linked to its source data so that it can be refreshed automatically. Although the data is physically aggregated or abridged, it remains in the cloud data platform with no practical limits on size. Materialized views are an important concept in query acceleration, and, as we have written in the past, AtScale’s Acceleration Structures are largely equivalent. The challenge in realizing the potential of materialized views lies in defining, creating, and maintaining them with the responsiveness that rapidly growing analytics programs demand.
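To make the idea concrete, here is a minimal sketch in Python that emulates a materialized view with the standard-library `sqlite3` module. SQLite has no native materialized views, so the summary table `sales_by_region` and the `refresh_aggregate` function are hypothetical stand-ins chosen for illustration. The summary is rebuilt in full on each refresh, whereas cloud platforms typically refresh automatically and often incrementally.

```python
import sqlite3


def refresh_aggregate(conn: sqlite3.Connection) -> None:
    """Rebuild the hypothetical sales_by_region summary from the sales source table."""
    with conn:  # commit on success, roll back on error
        conn.execute("DELETE FROM sales_by_region")
        conn.execute(
            """
            INSERT INTO sales_by_region (region, total_amount, order_count)
            SELECT region, SUM(amount), COUNT(*)
            FROM sales
            GROUP BY region
            """
        )


def build_demo() -> sqlite3.Connection:
    """Create an in-memory source table, its summary table, and some sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
    conn.execute(
        "CREATE TABLE sales_by_region "
        "(region TEXT PRIMARY KEY, total_amount REAL, order_count INTEGER)"
    )
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("east", 10.0), ("east", 5.0), ("west", 7.5)],
    )
    refresh_aggregate(conn)
    return conn


if __name__ == "__main__":
    conn = build_demo()
    # Queries read the small summary table instead of scanning every sale.
    print(conn.execute("SELECT * FROM sales_by_region ORDER BY region").fetchall())
    # New source rows only become visible in the summary after the next refresh.
    conn.execute("INSERT INTO sales VALUES ('west', 2.5)")
    refresh_aggregate(conn)
    print(conn.execute("SELECT * FROM sales_by_region ORDER BY region").fetchall())
```

Queries against the summary avoid scanning the source, but the summary is only as fresh as its last refresh, which is the maintenance burden described above.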

AtScale Acceleration Structures vs. Materialized Views

AtScale’s platform brings semantic understanding and artificial intelligence to building powerful views of data (we call these acceleration structures) that are continually, automatically optimized. The result is a dynamic analytics query experience that is responsive to query consumption patterns and cloud resource availability.

We use the term autonomous data engineering to describe the capability to create AtScale acceleration structures. The approach leverages four important sources of information to dynamically create and maintain these structures:

- Data relationships. AtScale maintains a logical model that defines the business rules and semantic meaning of source data fields. By understanding data relationships and how data can and cannot be aggregated, AtScale ensures that aggregations preserve the fidelity of the original data.

- The makeup of underlying data sets. We use the HyperLogLog++ (HLL++) algorithm to estimate the cardinality of data sets. This helps AtScale plan where pre-aggregation is likely to be beneficial.

- User behavior. AtScale collects statistics on what users are querying. Frequently repeated queries signal where an aggregate would be beneficial.

- Cloud infrastructure. AtScale lets users define preferred locations for storing acceleration structures. If storing aggregates on a data platform other than the one holding the source data offers performance or cost benefits, AtScale will autonomously choose the best and most cost-effective storage location.
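Cardinality estimation is what makes this kind of planning cheap: a sketch can estimate how many distinct groups an aggregate would contain without storing every key. The Python below is a minimal sketch of plain HyperLogLog with the linear-counting correction for small cardinalities. It omits the bias correction and sparse encoding that distinguish HLL++, and it is not AtScale’s implementation. The `estimated_reduction` helper is a hypothetical heuristic that compares the number of source rows with the estimated number of aggregate rows.

```python
import hashlib
import math


class HyperLogLog:
    """Minimal HyperLogLog cardinality estimator (plain HLL, not the HLL++ variant)."""

    def __init__(self, precision: int = 14) -> None:
        # The alpha constant below is only valid for m >= 128 registers (precision >= 7).
        if not 7 <= precision <= 18:
            raise ValueError("precision must be between 7 and 18")
        self.p = precision
        self.m = 1 << precision  # number of registers
        self.registers = [0] * self.m
        self.alpha = 0.7213 / (1 + 1.079 / self.m)

    def add(self, value: object) -> None:
        # 64-bit hash of the value's repr (deterministic across runs, unlike hash()).
        digest = hashlib.blake2b(repr(value).encode(), digest_size=8).digest()
        h = int.from_bytes(digest, "big")
        index = h >> (64 - self.p)             # first p bits choose a register
        rest = h & ((1 << (64 - self.p)) - 1)  # remaining 64 - p bits
        # Rank = 1-based position of the leftmost 1-bit in the remaining bits.
        rank = (64 - self.p) - rest.bit_length() + 1
        self.registers[index] = max(self.registers[index], rank)

    def estimate(self) -> float:
        raw = self.alpha * self.m * self.m / sum(2.0 ** -r for r in self.registers)
        zeros = self.registers.count(0)
        if raw <= 2.5 * self.m and zeros:
            # Small-range correction: linear counting over empty registers.
            return self.m * math.log(self.m / zeros)
        return raw


def estimated_reduction(rows: list, group_by: tuple, precision: int = 14) -> float:
    """Hypothetical heuristic: source row count divided by estimated aggregate row count."""
    sketch = HyperLogLog(precision)
    for row in rows:
        sketch.add(tuple(row[col] for col in group_by))
    return len(rows) / max(sketch.estimate(), 1.0)
```

A large reduction factor (many source rows collapsing into few groups) suggests an aggregate would be small and fast to scan, while a ratio near 1 suggests little benefit from pre-aggregating on those columns.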

Taken together, these sources of information allow AtScale to deliver a truly autonomous approach to optimizing analytics performance, managing data aggregations in ways that go beyond the capabilities of simplistic materialized views.

Last Mile Analytics Query Acceleration

In addition to our dynamic implementation of acceleration structures, AtScale’s integration with common BI platforms delivers an important boost to query performance as perceived by end users. When data analysts refresh a report, activate a filter or slicer, or drill into a metric in their BI dashboard, their tool generates queries for the connected data platform. For live cloud connections, these queries are translated into the SQL dialect preferred by the data platform. AtScale is designed to optimize common BI tool queries (including the MDX generated by Excel PivotTables, DAX from Power BI, and SQL from Tableau). Because AtScale handles query traffic in the language of the BI tool and dynamically decides whether to route each query to an existing aggregate table or to the atomic source data, users get a seamless query experience regardless of data size.
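The routing decision can be sketched as a containment check. The Python below is a hypothetical simplification, not AtScale’s routing logic: an aggregate can answer a query if it contains every requested dimension and measure, and a measure such as a distinct count can only be served from an aggregate at exactly the query’s grain, because it cannot be rolled up from a finer-grained summary (the fidelity concern raised under data relationships). Filters, joins, and the actual query rewrite are ignored, and all table and column names are illustrative.

```python
from dataclasses import dataclass

# Aggregate functions whose results can be rolled up from a finer-grained
# summary (counts roll up by summing them). Distinct counts cannot.
REAGGREGATABLE = {"sum", "count", "min", "max"}


@dataclass(frozen=True)
class Aggregate:
    name: str
    dimensions: frozenset  # columns the aggregate is grouped by
    measures: frozenset    # (column, function) pairs, e.g. ("amount", "sum")
    row_count: int


@dataclass(frozen=True)
class Query:
    group_by: frozenset
    measures: frozenset


def can_answer(agg: Aggregate, query: Query) -> bool:
    """True if `agg` holds everything the query needs at a usable grain."""
    if not query.group_by <= agg.dimensions:
        return False
    rollup_needed = query.group_by != agg.dimensions
    for column, func in query.measures:
        if (column, func) not in agg.measures:
            return False
        # Rolling up a finer-grained aggregate only works for re-aggregatable functions.
        if rollup_needed and func not in REAGGREGATABLE:
            return False
    return True


def route(query: Query, aggregates: list, source: str) -> str:
    """Pick the smallest aggregate that can answer the query, else the source table."""
    candidates = [a for a in aggregates if can_answer(a, query)]
    if not candidates:
        return source
    return min(candidates, key=lambda a: a.row_count).name


if __name__ == "__main__":
    aggregates = [
        Aggregate("agg_region_day", frozenset({"region", "day"}),
                  frozenset({("amount", "sum"), ("customer_id", "count_distinct")}), 36_500),
        Aggregate("agg_region", frozenset({"region"}),
                  frozenset({("amount", "sum")}), 50),
    ]
    q = Query(frozenset({"region"}), frozenset({("amount", "sum")}))
    print(route(q, aggregates, source="sales"))  # agg_region
```

Preferring the smallest eligible aggregate is one plausible policy; a real engine would also weigh freshness, storage location, and the cost of the rewritten query.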

Benchmarking Analytics Query Performance

AtScale maintains a set of benchmark studies that analyze cloud data platform query performance with and without AtScale on large (10TB+) data sets.
